AI Governance for New Zealand Technology Companies

Your AI product works. But the government agency evaluating your tender wants governance documentation. The enterprise prospect needs Privacy Act assurance before signing. The EU distributor requires AI Act compliance evidence. Governance is the gap between a good product and a signed contract.

Three deals you are losing right now

NZ tech companies build excellent AI products. Then governance gaps stall the contracts that matter most.

Government tenders that require governance evidence

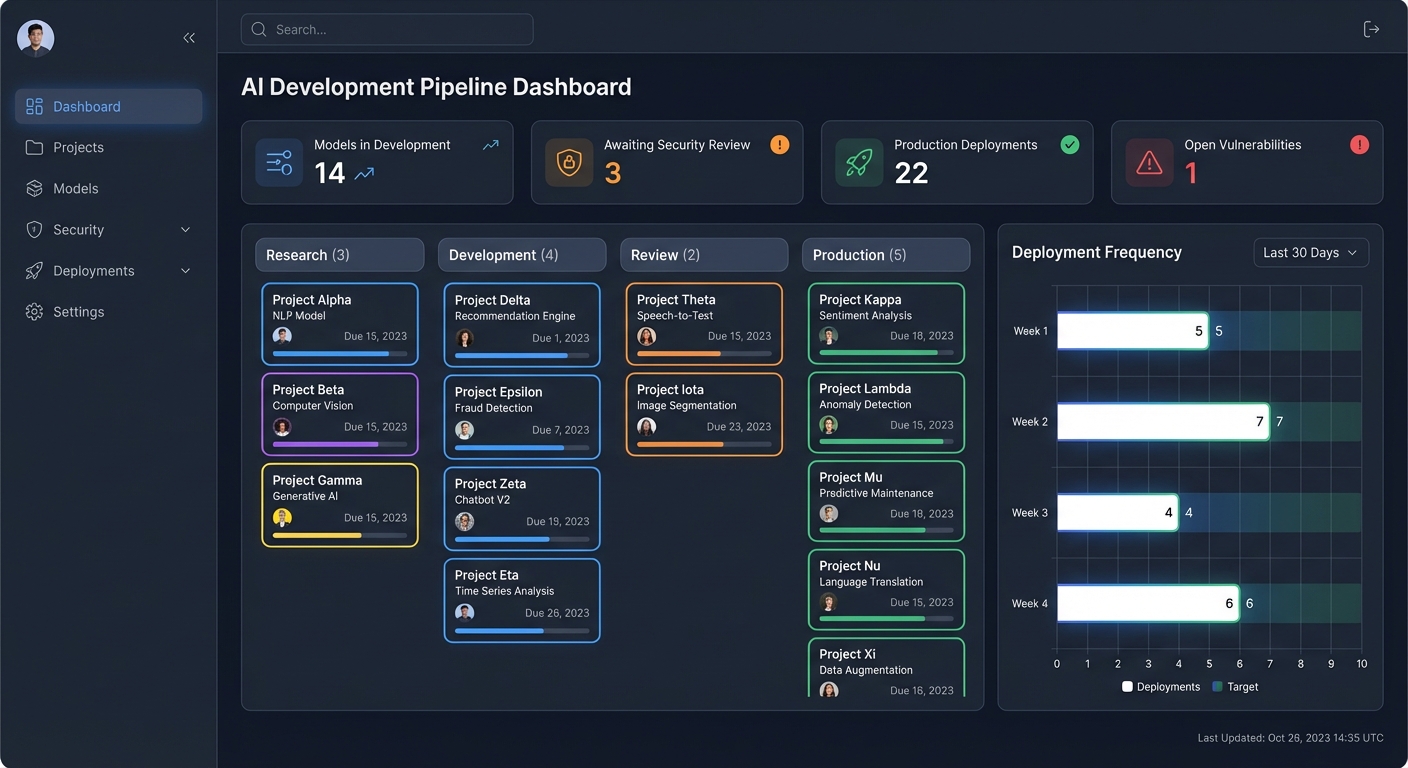

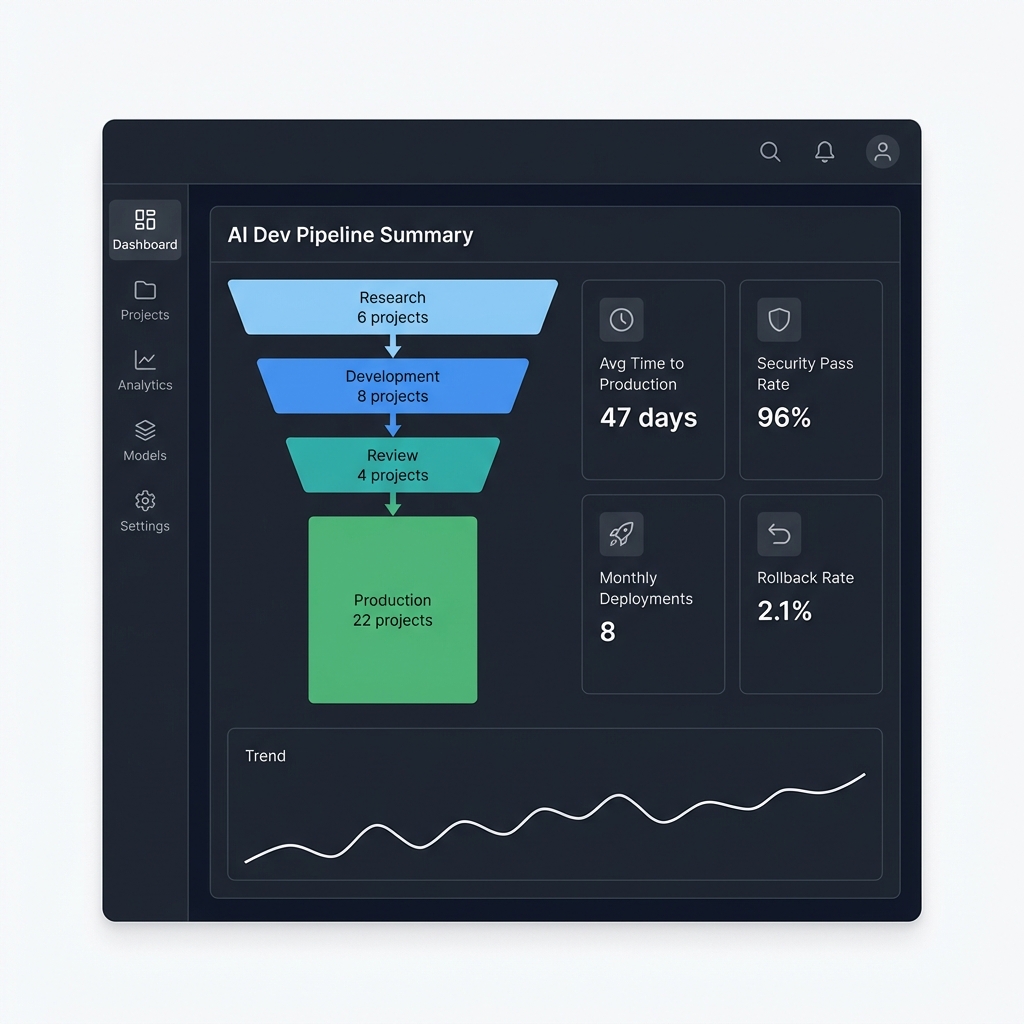

NZ government agencies now evaluate AI governance as part of procurement. The Public Service AI Framework sets expectations for risk assessment, data traceability, and supplier evaluation. Without documented governance, your tender response has a gap that your competitors' responses may not.

International expansion blocked by compliance gaps

Selling into the EU means AI Act compliance. US state laws add another layer. Enterprise customers in any market expect governance documentation as standard. NZ tech companies expanding offshore hit compliance walls they did not anticipate.

Enterprise clients demanding ISO 42001 or equivalent

Large organisations increasingly require formal AI governance assurance from their technology vendors. ISO 42001 certification -- or at minimum, demonstrable alignment -- is becoming a procurement checkbox. Without it, you are competing on product alone while others compete on product plus trust.

Governance embedded in your product, not bolted on

For technology companies, governance is not a separate workstream. It is a product feature that your buyers evaluate.

Product-Embedded Governance

Controls built into your development lifecycle

Your customers do not want to read a governance PDF. They want to see governance reflected in how your product handles data, makes decisions, and provides transparency. We help you embed governance into the product itself.

What this looks like:

- Model cards and transparency documentation for each AI feature

- Bias detection and mitigation integrated into CI/CD pipelines

- Data lineage tracking from training data through to inference

- Explainability features that customers can surface to their own users

- Audit trails that satisfy your customers' own compliance requirements
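As one illustration of a bias check inside a CI/CD pipeline, the sketch below computes a demographic parity gap (the difference in positive-prediction rates between groups) and compares it to a threshold. It is a minimal sketch, not a complete fairness methodology: the function names, the group labels, and the 0.10 default threshold are all assumptions made for this example, and the right metric depends on the product.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of positive predictions for each group.

    `records` is an iterable of (group, prediction) pairs, where
    prediction is 0 or 1.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in records:
        totals[group] += 1
        positives[group] += prediction
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_gap(records):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(records)
    if not rates:
        raise ValueError("records must not be empty")
    return max(rates.values()) - min(rates.values())


def fairness_gate(records, max_gap=0.10):
    """Return True when the gap is within the (hypothetical) threshold."""
    return demographic_parity_gap(records) <= max_gap


# Illustrative data only: group "a" is selected 3 times out of 4,
# group "b" 2 times out of 4.
sample = [
    ("a", 1), ("a", 0), ("a", 1), ("a", 1),
    ("b", 1), ("b", 0), ("b", 0), ("b", 1),
]
print(f"gap = {demographic_parity_gap(sample):.2f}")
print("gate passed" if fairness_gate(sample) else "gate failed")
```

In a pipeline, a test step could call `fairness_gate` on a held-out evaluation set and fail the build when it returns `False`, so a regression in fairness is caught the same way a failing unit test would be.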

Enterprise Sales Enablement

Governance documentation that closes deals

Enterprise procurement teams send AI governance questionnaires. Government agencies require evidence of responsible AI practices. Your sales team needs governance materials that are ready before the RFP lands, not scrambled together after.

What you get:

- Pre-built responses for common AI governance questionnaires

- Government tender governance evidence packages

- Customer-facing AI ethics and transparency documentation

- Third-party risk assessment materials for vendor evaluation

- ISO 42001 alignment evidence for certification-conscious buyers

The compliance landscape NZ tech companies navigate

New Zealand has no single AI law. But multiple overlapping frameworks apply to AI products, and your customers expect you to know which ones matter.

Privacy Act 2020

Your product's data handling foundation

- 13 Privacy Principles apply to every AI feature processing personal information

- Purpose limitation constrains how you use customer data for model training

- Mandatory notification of notifiable privacy breaches to the Privacy Commissioner as soon as practicable (the Commissioner's stated expectation is within 72 hours)

- Cross-border data transfer restrictions affect offshore model hosting

ISO 42001 Certification

The competitive differentiator

- International standard specifically for AI management systems

- Recognised by enterprise procurement teams globally

- Few NZ tech companies certified -- early movers gain significant advantage

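- Available through accredited certification bodies (in New Zealand, certification bodies are accredited by JAS-ANZ; Standards New Zealand publishes standards but does not accredit certifiers)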

EU AI Act

Required for European market access

- Applies extraterritorially to NZ companies with EU users or customers

- High-risk AI systems face mandatory compliance requirements from August 2026

- Risk classification determines documentation and testing obligations

- Penalties scale to global turnover -- not just EU revenue
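To make the turnover point concrete, the sketch below works through the maximum fine ceilings in Article 99 of the EU AI Act. The three tiers and their percentages come from the Act. The function and dictionary names are ours, and the turnover figures are invented for illustration. These are upper limits on fines, not predictions of what a regulator would impose.

```python
# Ceilings from Article 99 of the EU AI Act:
# (fixed amount in EUR, percent of total worldwide annual turnover)
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier, worldwide_turnover_eur, is_sme=False):
    """Return the maximum fine for a tier, in whole euros.

    For most companies the ceiling is whichever of the two amounts is
    higher; for SMEs, including startups, it is whichever is lower.
    """
    fixed_cap, percent = FINE_TIERS[tier]
    turnover_cap = worldwide_turnover_eur * percent // 100
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


# Hypothetical company with EUR 1 billion worldwide turnover:
# 3% of turnover (EUR 30 million) exceeds the EUR 15 million fixed amount.
print(max_fine_eur("other_obligation", 1_000_000_000))

# Hypothetical startup with EUR 20 million turnover: the lower amount applies.
print(max_fine_eur("other_obligation", 20_000_000, is_sme=True))
```

The calculation uses worldwide turnover, which is why revenue earned in New Zealand or Australia counts toward the ceiling even when only a small share of sales comes from the EU.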

NZ Government Procurement

Public Service AI Framework requirements

- Agencies evaluate AI supplier governance as part of procurement decisions

- Risk assessment, data traceability, and exit strategies expected from vendors

- Treaty of Waitangi obligations extend to AI systems in government use

- Vendors with governance documentation are better placed when agencies score supplier governance

Built for Auckland's Tech Hub and Beyond

Over 60% of New Zealand's technology companies are based in Auckland. From SaaS providers in Wynyard Quarter to AI startups in GridAKL, the challenge is the same: governance frameworks that match the pace of product development without slowing it down.

SaaS Providers

B2B platforms adding AI features need Privacy Act compliance documentation and governance evidence to move upmarket into enterprise accounts. Governance becomes the unlock for larger deal sizes.

AI and ML Startups

Companies where AI is the core product face the most scrutiny. Investors ask about governance during due diligence. Enterprise customers require it before procurement. Building governance early costs less than retrofitting later.

Tech Consultancies and Digital Agencies

Building AI solutions for clients means your governance practices directly affect their compliance posture. Demonstrating governance maturity differentiates your consulting practice from competitors who treat AI as purely a technical delivery.

What we deliver for technology companies

Practical governance outputs that serve double duty: compliance protection and sales acceleration.

Privacy Act Compliance for AI Products

- Privacy Impact Assessments for each AI feature

- Data processing documentation for training and inference

- Cross-border transfer risk assessments for offshore hosting

- Consent mechanisms and transparency notices for end users

ISO 42001 Readiness Assessment

- Gap analysis against ISO 42001 requirements

- AI management system design and documentation

- Risk treatment plans and control implementation

- Internal audit preparation and certification pathway

Enterprise Governance Documentation Package

- AI governance policy suite for customer-facing use

- Model cards and system documentation templates

- Procurement questionnaire response library

- Vendor risk assessment self-service materials
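A model card template can be as simple as a structured record that lives next to the code and is exported for customers. The sketch below is one possible shape, assuming a small set of fields. The field names, the `gaps` check, and the example feature are all hypothetical, not a standard schema.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelCard:
    """Minimal, illustrative model card for one AI feature."""

    feature_name: str
    intended_use: str
    training_data_sources: list[str] = field(default_factory=list)
    known_limitations: list[str] = field(default_factory=list)
    human_oversight: str = "not documented"
    uses_personal_information: bool = False

    def gaps(self) -> list[str]:
        """Names of fields that still need to be filled in."""
        missing = []
        if not self.training_data_sources:
            missing.append("training_data_sources")
        if not self.known_limitations:
            missing.append("known_limitations")
        if self.human_oversight == "not documented":
            missing.append("human_oversight")
        return missing

    def to_json(self) -> str:
        """Serialise the card for a documentation site or questionnaire."""
        return json.dumps(asdict(self), indent=2)


# Hypothetical feature used only to show the template in use.
card = ModelCard(
    feature_name="invoice-categoriser",
    intended_use="Suggest ledger categories for uploaded invoices",
    training_data_sources=["licensed accounting dataset (hypothetical)"],
)
print(card.gaps())
print(card.to_json())
```

Keeping the card in version control means it changes in the same pull request as the model, and the `gaps` check can flag incomplete documentation before a release.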

Government Tender Governance Evidence

- Public Service AI Framework alignment documentation

- Risk assessment and data traceability evidence

- Exit strategy and data portability commitments

- Cultural impact considerations for government AI use

Bias Detection and Ethical AI Framework

- Fairness testing methodology for your AI models

- Bias monitoring and mitigation procedures

- Ethical AI principles aligned with OECD guidelines

- Incident response procedures for AI failures

International Market Compliance

- EU AI Act risk classification and compliance roadmap

- US state AI law mapping for American market entry

- Multi-jurisdiction governance framework design

- Market-specific documentation and compliance evidence

Questions NZ tech companies ask us

We are a 20-person startup. Is governance realistic at our stage?

Yes. Early-stage governance is lighter than you expect. A startup does not need the same framework as a bank. We build governance that matches your current size and scales with you -- typically starting with Privacy Act compliance for your AI product, a basic AI ethics policy, and documentation that satisfies enterprise procurement questions. Most startups complete the initial framework in 4-6 weeks alongside normal product development.

How does ISO 42001 certification help us win deals?

ISO 42001 is the international standard for AI management systems. Enterprise procurement teams recognise it as third-party validation that your AI practices meet a defined benchmark. In competitive evaluations, certification -- or documented alignment with the standard -- can be the factor that moves you past shortlisting. Very few NZ tech companies hold this certification today, so early movers gain differentiation that erodes over time as adoption increases.

How does the Privacy Act 2020 apply to our SaaS AI features?

If your AI features process personal information -- customer data, user behaviour, employee records -- the 13 Privacy Principles apply. Key areas for SaaS companies: purpose limitation (can you use customer data to train your models?), accuracy (are your AI outputs reliable enough to act on?), transparency (do users know AI is making decisions about them?), and cross-border transfers (where are your models hosted and where does data flow?). We map each AI feature against the relevant principles and build compliance into your product architecture.
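The mapping exercise can be pictured as a small checklist per feature. The sketch below is illustrative only: it pairs the four areas above with the Privacy Act 2020 principle numbers we would usually start from (3, 8, 10 and 12), and the class name, feature name, and review status are assumptions for the example rather than a complete assessment.

```python
from dataclasses import dataclass, field

# Principle numbers from the Privacy Act 2020, with short labels of our own.
PRINCIPLES = {
    3: "transparency when collecting from the individual",
    8: "accuracy checked before use",
    10: "limits on use (purpose limitation)",
    12: "disclosure outside New Zealand",
}


@dataclass
class FeatureReview:
    """Tracks which principles are relevant to a feature and which are documented."""

    feature: str
    relevant: set[int] = field(default_factory=set)
    documented: set[int] = field(default_factory=set)

    def outstanding(self) -> list[int]:
        """Relevant principles that have no documentation yet, in numeric order."""
        return sorted(self.relevant - self.documented)


# Hypothetical feature: a churn-prediction model trained on customer usage data.
review = FeatureReview(
    feature="churn-predictor",
    relevant={3, 8, 10, 12},
    documented={3, 10},
)
for number in review.outstanding():
    print(f"{review.feature}: principle {number} ({PRINCIPLES[number]}) not yet documented")
```

A per-feature record like this gives product and legal teams a shared list of what remains open before a feature ships.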

What do NZ government agencies look for in AI vendor governance?

The Public Service AI Framework (February 2025) sets expectations for government agencies procuring AI. Agencies evaluate vendors on: risk assessment documentation, data traceability (where training data comes from and how it flows), exit strategies (what happens if the contract ends), security practices, and cultural considerations including Treaty of Waitangi obligations. We help you prepare governance evidence that directly addresses these evaluation criteria before tenders open.

We are expanding to the EU. What does the AI Act mean for us?

The EU AI Act applies extraterritorially. If your AI product has EU users or customers, compliance obligations kick in regardless of where your company is based. The first step is risk classification: most B2B SaaS AI features fall into limited or minimal risk categories, but some applications (HR decisions, credit scoring, biometric systems) qualify as high-risk with significantly more demanding requirements. High-risk system compliance requirements apply from August 2026. We assess your product against the risk classification framework and build a compliance roadmap specific to your EU market entry plans.

How long does it take to get governance-ready for enterprise sales?

For most NZ tech companies, the initial governance documentation package takes 6-8 weeks. This covers Privacy Act compliance assessment, core AI governance policies, procurement questionnaire responses, and customer-facing transparency documentation. ISO 42001 readiness adds another 3-4 months depending on existing maturity. Full ISO 42001 certification typically takes 6-12 months from starting the readiness process. We recommend starting with the enterprise documentation package to unblock immediate deals while pursuing certification in parallel.

AI Governance for NZ Technology Companies

Book a 30-minute assessment to identify which governance gaps are costing you contracts. We will map your AI product against Privacy Act requirements, enterprise procurement expectations, and ISO 42001 readiness -- then build a practical plan to close the gaps.