AI Risk Framework Development for New Zealand

New Zealand has no AI-specific risk regulation. That does not mean risk does not exist -- it means the entire burden of identifying, classifying, and managing AI risk falls on your organisation. And under the Companies Act 1993, on your directors personally.

We build bespoke AI risk frameworks grounded in NZ law -- Privacy Act 2020, Fair Trading Act, Companies Act director duties, and Treaty of Waitangi obligations -- so you are not guessing at what "reasonable care" looks like when something goes wrong.

The Voluntary Approach Has a Catch

Light-touch regulation sounds like freedom. In practice, it means every AI risk decision your organisation makes is a judgement call -- and if that judgement turns out to be wrong, there is no prescribed standard to point to as a defence.

Director Exposure Under Companies Act

Section 137 of the Companies Act 1993 requires directors to exercise the care, diligence, and skill that a reasonable director would exercise in the same circumstances. If an AI system causes harm and the board cannot demonstrate it understood and managed the risks, directors may face personal liability. Many boards have no AI risk framework to rely on.

Treaty Obligations Add Unique Complexity

AI systems that process data relating to Maori and Pacific communities carry risk dimensions that do not exist anywhere else. Data sovereignty, cultural safety, and Treaty of Waitangi obligations create risk categories that no off-the-shelf framework addresses.

Multiple Laws, No Unified View

The Privacy Act 2020 covers personal information. The Fair Trading Act 1986 covers misleading conduct. The Companies Act 1993 covers director duties. The Consumer Guarantees Act 1993 covers the quality of goods and services. Your AI risks sit across all of them, but no single framework connects the dots.

When 25% of New Zealand organisations identify governance as the "missing link" in their AI adoption, it signals something important: the technology is moving faster than the risk management practices designed to contain it. In a principles-based regulatory environment, that gap belongs to you.

- PolyGovern analysis of NZ AI governance landscape, drawing on industry survey data (2025)

Built for a Market Without a Rulebook

Importing an overseas risk framework and bolting it onto your organisation does not work. New Zealand's regulatory landscape is different -- principles-based, light-touch, and shaped by obligations that are unique to Aotearoa. We build frameworks that reflect that reality rather than ignoring it.

Grounded In

NZ Regulatory Risk Mapping

We map every AI use case against the specific NZ legislation that applies: Privacy Act information privacy principles, Fair Trading Act misleading conduct provisions, Companies Act director duties, and sector-specific obligations from FMA or RBNZ. No generic checklists -- every risk is tied to an actual legal obligation.

AI Risk Taxonomy for Aotearoa

We develop a risk classification system that includes the categories generic frameworks miss: Maori data sovereignty risks, cultural safety impacts for Maori and Pacific populations, Treaty obligation breaches, vendor concentration risks from offshore AI providers, and algorithmic bias affecting communities that are already underserved.

Director Liability Assessment

We analyse your AI portfolio through the lens of Companies Act section 137 duties, identifying where directors are most exposed. The output is a clear liability map showing which AI systems carry the highest personal risk and what controls would demonstrate reasonable care and diligence.

Controls and Mitigations Design

For each identified risk, we design proportionate controls: Privacy Act compliance checkpoints, Fair Trading Act content review processes, cultural safety evaluation workflows, vendor dependency thresholds, and model performance boundaries. Controls are practical enough to actually implement, not theoretical.

Governance Integration and Reporting

We embed AI risk into your existing governance structures rather than layering on another committee. Board reporting templates translate AI risk into language directors can act on, with clear escalation triggers and decision rights tied to your organisational risk appetite.

What We Deliver

Every deliverable is built for New Zealand's legal and cultural context. Nothing is borrowed from another jurisdiction and relabelled.

NZ AI Risk Taxonomy

A classification system covering Privacy Act violations, Fair Trading Act breaches, Treaty obligation failures, data sovereignty risks, algorithmic bias against Maori and Pacific communities, and vendor concentration exposure. Delivered as a structured document and Excel file for GRC integration.

Privacy Act Risk Mapping

Detailed mapping of each AI system against the 13 Information Privacy Principles. Identifies where automated processing creates compliance exposure, what notifications are required, and where cross-border data flows raise sovereignty concerns.

Treaty Impact Assessment

Evaluation of how your AI systems affect Maori communities and Treaty of Waitangi obligations. Covers data sovereignty, cultural safety, equitable outcomes, and partnership principles. Designed for organisations in government, health, education, and financial services.

Director Liability Analysis

A board-ready analysis of personal liability exposure under Companies Act 1993 sections 131-138. Maps each AI system to director duties, identifies highest-risk scenarios, and provides the evidence trail directors need to demonstrate reasonable care.

Cultural Safety Risk Evaluation

Assessment of how AI systems affect Maori and Pacific populations specifically. Evaluates algorithmic bias, representational harm, language and cultural assumptions in training data, and equitable access to AI-driven services.

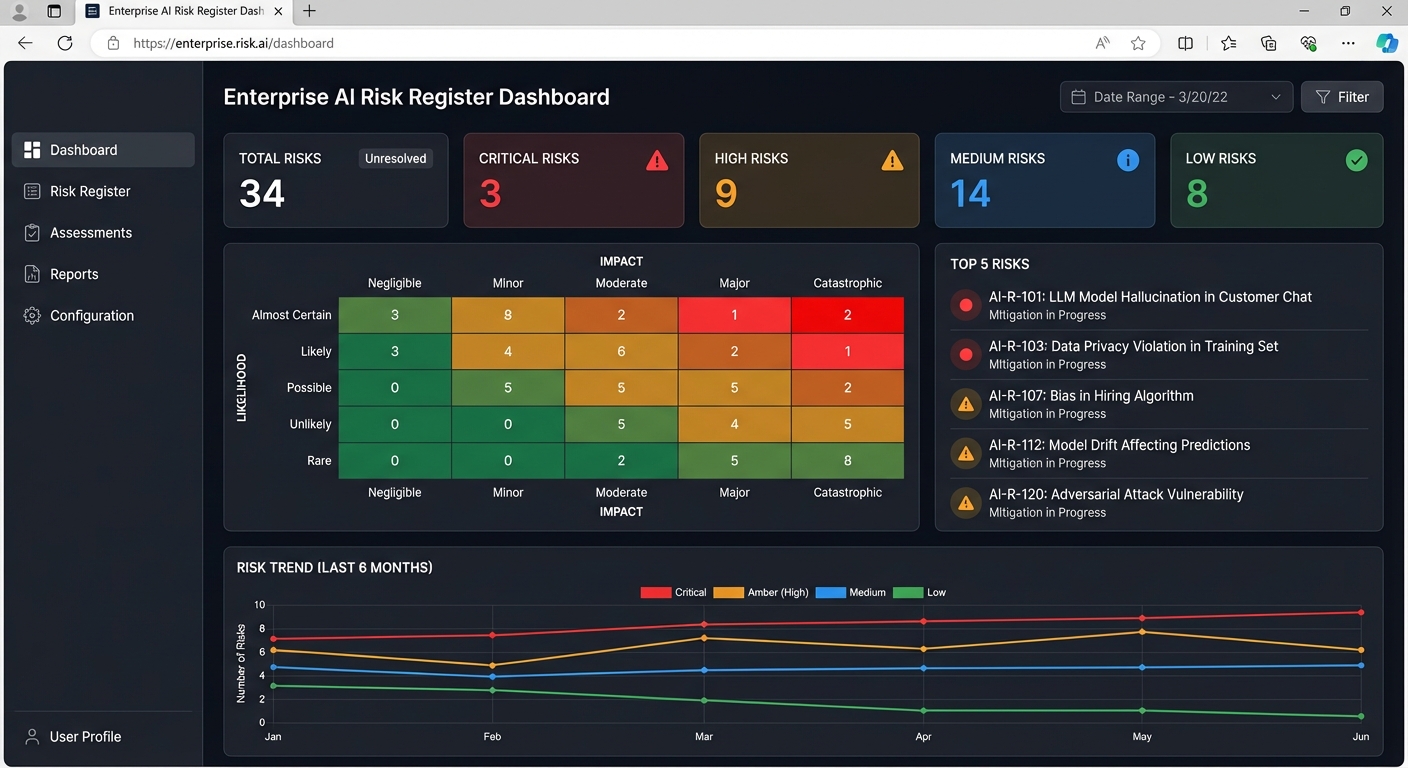

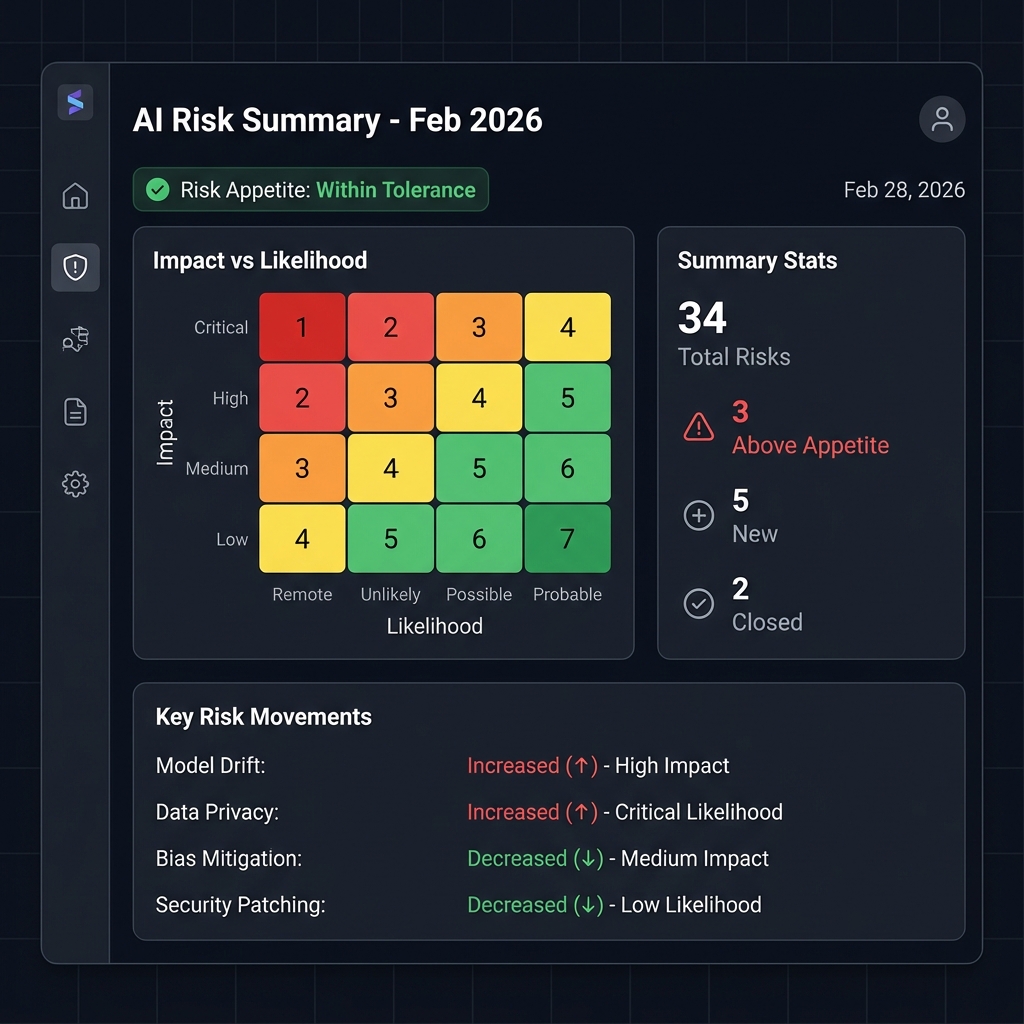

Risk Register and Controls Library

Pre-populated AI risk register with NZ-specific risks, control mappings, and assessment fields. Includes 50+ controls covering Fair Trading Act compliance checks, Privacy Act safeguards, cultural safety reviews, and vendor dependency management.

Who Needs This

If your organisation is deploying AI in New Zealand and nobody has asked "what could go wrong, and who is liable?" -- this is where you start.

Financial Services Under FMA/RBNZ

Banks, insurers, and fund managers who need to demonstrate they are managing AI risks within their existing regulatory obligations -- before the regulator asks.

Government and Public Sector

Agencies subject to the Public Service AI Framework that need to operationalise risk management for AI systems affecting New Zealanders, with particular attention to Treaty obligations.

Organisations Serving Maori and Pacific Communities

Health, education, and social service providers whose AI systems must account for cultural safety, data sovereignty, and equitable outcomes for underserved populations.

Directors and Board Members

Directors who want documented evidence that AI risks are being managed -- because under Companies Act 1993, "we didn't know" is not a viable defence.

Common Questions

What AI risks are unique to New Zealand?

Three categories stand out. First, Treaty of Waitangi obligations create risk dimensions around Maori data sovereignty, cultural safety, and equitable outcomes that exist nowhere else. Second, New Zealand's small market means heavy reliance on offshore AI vendors, creating concentration risks if a single provider fails or changes terms. Third, the absence of AI-specific regulation means your organisation bears full responsibility for defining "reasonable" risk management -- there is no prescribed standard to fall back on.

How do Treaty of Waitangi obligations affect AI risk?

The Treaty principles of partnership, participation, and protection bind the Crown directly and shape expectations of organisations delivering public services, and they apply to how AI systems collect, process, and make decisions about Maori. Risks include training data that underrepresents or misrepresents Maori, algorithms that produce inequitable outcomes for Maori and Pacific populations, and data sovereignty issues when Maori data is processed offshore without appropriate governance. Our framework includes specific risk categories and controls for Treaty compliance.

What does the FMA expect regarding AI risk management?

The FMA expects regulated entities to manage AI risks under their existing obligations -- conduct licensing, fair dealing, and client care duties. While there is no AI-specific rule, the FMA has signalled that entities using AI for financial advice, credit decisions, or customer interactions should demonstrate governance proportionate to the risk. Our frameworks align to these expectations and position you ahead of any future formalisation.

Can directors be personally liable for AI failures?

Yes. Under sections 131-138 of the Companies Act 1993, directors must act in good faith, in the best interests of the company, and with reasonable care, diligence, and skill. If an AI system causes significant harm (privacy breaches, discriminatory outcomes, misleading content) and the board cannot demonstrate it took reasonable steps to understand and manage those risks, individual directors may face personal liability. A documented AI risk framework is the clearest evidence of due diligence.

How long does this take and what does it cost?

Most engagements run 8-14 weeks: regulatory mapping and discovery (2-3 weeks), taxonomy and assessment design (3-5 weeks), controls development and Treaty impact assessment (2-3 weeks), and integration and delivery (1-3 weeks). Pricing depends on the number of AI systems in scope and the regulatory complexity of your sector. We provide a fixed-price proposal after an initial scoping conversation.

Related Services

AI Governance Consulting

Full governance programme design covering operating models, committee structures, and decision-making frameworks for NZ organisations.

AI Audit and Assessment

Independent evaluation of your current AI governance maturity, identifying gaps before they become incidents.

ISO 42001 Certification

Achieve the international AI management system standard, providing structured proof of responsible AI governance.

Build Your AI Risk Framework for New Zealand

In a market with no prescribed AI risk framework, the organisations that build their own set the standard. Talk to us about developing a risk framework that reflects NZ law, Treaty obligations, and the reality of how your organisation actually uses AI.