Make your AI systems comply with the Privacy Act 2020 before the Privacy Commissioner asks questions you can't answer

We help New Zealand organisations interpret the 13 Privacy Principles for AI systems, conduct Privacy Impact Assessments, and implement data handling procedures that satisfy regulators.

The challenge

Your AI systems collect, process, and make decisions using personal information, but the Privacy Act 2020 doesn't mention AI. You're interpreting principles largely carried over from the Privacy Act 1993, written long before generative AI went mainstream.

No AI-specific regulation exists

The Privacy Commissioner has issued general guidance on privacy and AI, but there is no dedicated AI-specific regulation under the Privacy Act. You're interpreting the 13 Privacy Principles for training data, automated decisions, and cross-border data flows with limited regulatory direction.

Training data creates compliance gaps

Did you collect that training data for the purpose you're now using it? Is it accurate enough for automated decisions? Can individuals access or correct information your AI learned from? Most organisations can't answer these questions.

Individual rights get complicated

Someone requests access to their personal information. What do you disclose when an AI model learned patterns from their data but doesn't store it directly? How do you explain an automated decision when the model is a black box?

Our approach

We translate abstract Privacy Act principles into practical compliance requirements for AI systems. Not theoretical legal analysis, but operational procedures your teams can actually implement.

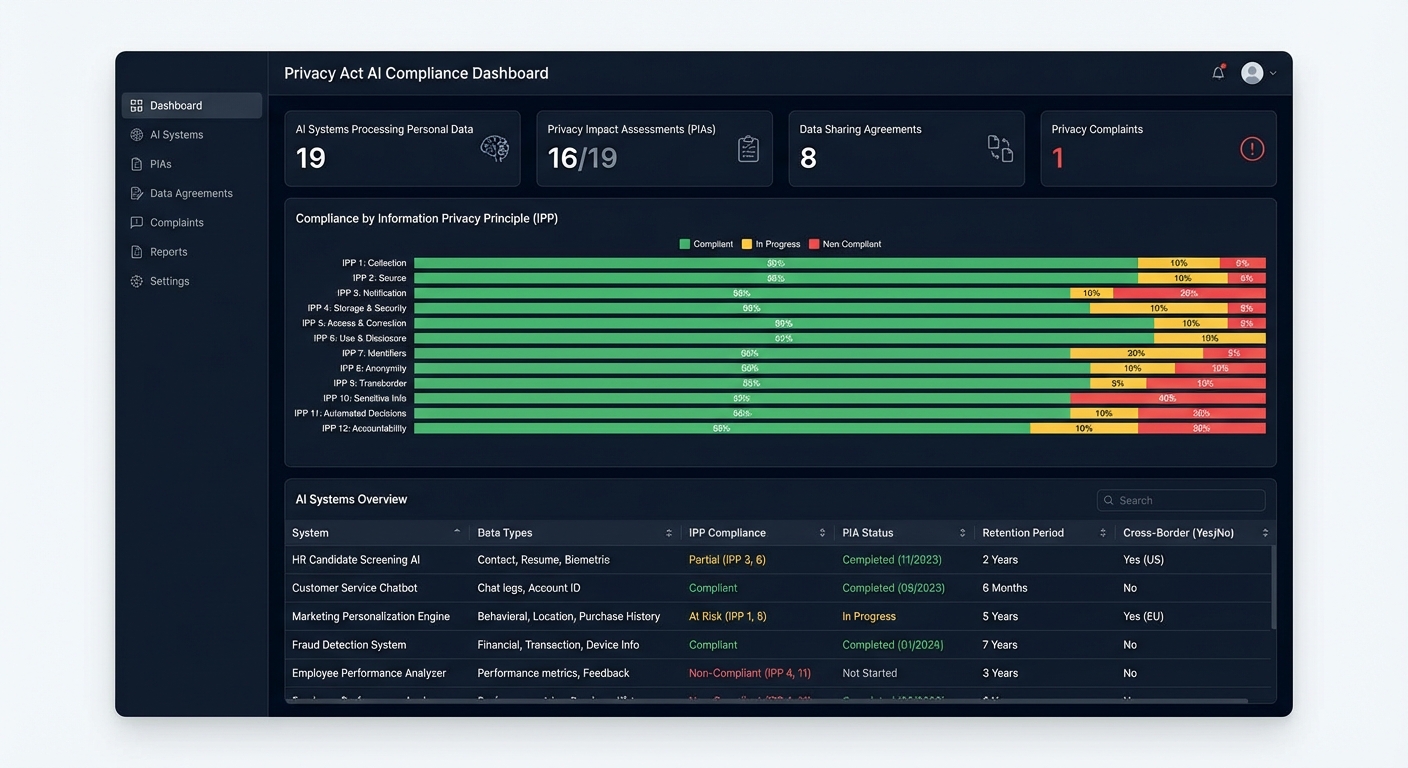

Audit your AI systems against the 13 Privacy Principles

We examine how your AI systems collect, store, use, and disclose personal information. We map each system to the 13 Privacy Principles and identify where you're exposed: training data used beyond the purpose it was collected for, automated decisions you can't explain, and cross-border data transfers to AI vendors without adequate safeguards.

Deliverable: Privacy compliance gap analysis, risk register

Conduct Privacy Impact Assessments for high-risk AI

For AI systems making significant automated decisions or processing sensitive information, we conduct formal Privacy Impact Assessments. We document what information you collect, why you need it, who can access it, how long you keep it, and what rights individuals have. This becomes your evidence of compliance if the Privacy Commissioner investigates.

Deliverable: Privacy Impact Assessment documentation

Build operational procedures for ongoing compliance

We create practical procedures for the messy realities of AI: consent mechanisms that explain AI use in plain language, processes for handling access requests when AI models are involved, data accuracy requirements for training datasets, and protocols for evaluating third-party AI vendors against Privacy Act requirements.

Deliverable: Data handling procedures, consent templates, vendor assessment frameworks

Train staff on Privacy Act requirements for AI

Your developers, product managers, and business users need to understand what the Privacy Act requires when they deploy AI. We train your teams on the principles that matter most: purpose limitation, accuracy, individual rights, and transparency.

Deliverable: Training materials, quick-reference guides

Key Privacy Principles for AI systems

Here's how we interpret the Privacy Principles most often at stake in AI contexts. This is the framework we use to assess your compliance.

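Principle 1: Purpose of collection

You may only collect personal information that is necessary for a lawful purpose connected with your functions or activities. AI training requires vast amounts of data, which creates pressure to collect more than you need. Scraped data and third-party datasets are common problem areas, because you often can't show why the information was collected or that it was necessary.

Principle 3: Collection of information from subject

When you collect personal information, you must take reasonable steps to tell people why you're collecting it and who will receive it. If AI is part of that purpose, "We use AI to improve our services" isn't specific enough. You need to explain what decisions the AI makes and how.

Principle 8: Accuracy

Before you use personal information, you must take reasonable steps to check that it is accurate, up to date, complete, relevant, and not misleading. Training data must be accurate enough for your AI's decisions. If your model makes hiring decisions based on outdated employment records or credit decisions based on incomplete financial data, you're likely breaching this principle.

Principles 10 and 11: Use and disclosure

Your AI can't use personal information for a purpose beyond the one you collected it for (Principle 10) unless an exception applies, such as the new purpose being directly related to the original one. Repurposed internal data often fails this test. Using customer data to train a model you'll sell to other companies? That also raises a disclosure issue under Principle 11.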

Using overseas AI vendors? You're still responsible for compliance

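Many AI services process data offshore, including those from OpenAI, Google, Microsoft, and AWS. The Privacy Act doesn't prohibit this. If a vendor only processes information on your behalf, you're treated as still holding it, and Principle 5 requires reasonable safeguards against misuse. If you disclose information to an overseas vendor for its own purposes, Principle 12 applies and generally requires that the information be protected by comparable safeguards. Either way, that means assessing vendors' privacy practices, negotiating data processing agreements, and understanding where your data actually goes.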

We help you evaluate AI vendors against Privacy Act requirements and implement safeguards for cross-border transfers.

Who this is for

Financial services organisations

Using AI for credit decisions, fraud detection, or customer service? FMA and RBNZ expect you to manage privacy risks under existing obligations. Privacy Act compliance is foundational.

Healthcare providers

Health information gets extra protection under the Health Information Privacy Code 2020 (HIPC). If your AI processes patient data, you need both Privacy Act and HIPC compliance.

Government agencies

Public sector AI must comply with Privacy Act 2020 and align with the Public Service AI Framework's transparency requirements. We help you meet both obligations.

HR and recruitment technology

Using AI to screen candidates, assess performance, or make employment decisions? You're processing sensitive personal information and making automated decisions that significantly affect individuals, which is one of the highest-risk scenarios under privacy law.

Frequently asked questions

Does the Privacy Commissioner actually enforce these requirements for AI?

The Privacy Commissioner doesn't have AI-specific rules, but enforces the Privacy Act 2020 for all processing of personal information, including by AI systems. Recent enforcement actions have focused on automated decision-making and data accuracy, both central to AI systems. Proactive compliance is cheaper than responding to a complaint.

What happens if someone requests access to personal information used to train our AI model?

You must give them access to the personal information you hold about them (Principle 6) and respond to any request to correct it (Principle 7), and you should be ready to explain how it was used. If your model learned patterns from their data but doesn't store it directly, you need procedures for explaining what happened. We help you develop protocols for these complex requests.

Can we use customer data to train AI models we'll sell or license to other companies?

Generally only if that use was part of the purpose you collected the data for and your collection notice covered it. Most organisations collected data for their own business purposes, not to train commercial AI products. Otherwise, repurposing data requires either the individual's authorisation or a careful legal analysis of whether the new use is directly related to the original purpose or falls within another exception under Principle 10.

Do we need a Privacy Impact Assessment for every AI system?

Not necessarily. The Privacy Act doesn't mandate PIAs, but the Privacy Commissioner recommends them where privacy risks are significant. We help you assess which AI systems need formal PIAs and which can be addressed through lighter-touch privacy reviews.

Related services

AI Risk Assessment

Identify privacy risks alongside operational, ethical, and regulatory risks in a comprehensive AI risk assessment.

Healthcare AI Governance

Health information requires extra protection under HIPC 2020. We help healthcare organisations comply with both Privacy Act and health-specific requirements.

AI Governance Consulting

Privacy compliance is one component of comprehensive AI governance. Build a complete governance framework that addresses risk, compliance, ethics, and oversight.

Ready to make your AI systems compliant with the Privacy Act 2020?

Schedule a privacy compliance review to understand where your AI systems are exposed and what procedures you need to implement.