Board-Level AI Governance for New Zealand Directors

Sections 131 to 138 of the Companies Act 1993 do not mention artificial intelligence. They do not need to. Your duty of care, diligence, and skill applies to every system your organisation deploys -- including the AI ones approved without board oversight.

76% of New Zealand leaders are prioritising AI agents. One in four says governance is the missing link. We close that gap for boards across Aotearoa.

Why NZ Boards Cannot Afford to Wait on AI Governance

New Zealand has no AI-specific legislation. That is not a comfort -- it is a trap. Without prescriptive rules, your existing director duties become the standard against which your AI oversight will be judged.

Personal Liability Under the Companies Act

Section 131 requires directors to act in good faith and in the best interests of the company. Section 137 demands the care, diligence, and skill of a reasonable director. When an AI system causes harm -- biased lending decisions, privacy breaches, flawed automated advice -- the question is not whether the board understood the technology. It is whether they took reasonable steps to govern it. Directors who cannot demonstrate informed oversight face personal exposure.

Voluntary Landscape Means Boards Set the Standard

New Zealand's AI governance environment is largely voluntary. The government's Algorithm Charter is opt-in. The Public Service AI Framework applies to agencies, not the private sector. There is no NZ equivalent of the EU AI Act. This means your board is not following a rulebook -- it is writing one. The organisations that establish rigorous governance now will define what "reasonable" looks like when regulators and courts inevitably assess director conduct.

Te Tiriti Obligations at Board Level

AI systems that process data about Maori communities, deliver services to Maori, or operate in sectors with Crown obligations raise questions that technology teams cannot answer alone. Data sovereignty, tino rangatiratanga over information, meaningful partnership in system design -- these are governance-level decisions. Boards that delegate Treaty considerations to IT departments are exposing both the organisation and themselves.

How We Deliver Board-Level AI Governance for NZ

Four integrated workstreams designed for the New Zealand governance context. Not a framework borrowed from another jurisdiction -- built for the Companies Act, the Privacy Act, and Te Tiriti.

Director Liability Briefings

Most directors understand their general duties. Few have considered how those duties apply when management deploys machine learning models, generative AI tools, or autonomous decision-making systems. We run structured briefings that translate sections 131 through 138 of the Companies Act into concrete AI governance expectations -- what directors must ask, what documentation to require, and where personal exposure sits.

- Companies Act duty mapping to AI risk categories

- Personal liability scenarios and case analysis

- D&O insurance gap assessment for AI-related claims

- Director question frameworks for management reporting

NZ Regulatory Landscape Education

The FMA expects conduct obligations to extend to AI in financial services. The RBNZ expects prudential risk oversight to encompass technology systems. The Privacy Commissioner has signalled algorithmic decision-making as a priority. These expectations exist today, even without AI-specific legislation. We bring boards up to speed on what each regulator expects and where their organisation sits.

- FMA conduct expectations for AI-enabled services

- RBNZ prudential risk expectations mapping

- Privacy Act 2020 obligations as they apply to automated decision-making

- Algorithm Charter and Public Service AI Framework briefing

Treaty-Informed Governance Design

Te Tiriti o Waitangi creates obligations that cannot be addressed by a privacy impact assessment or a standard risk register. When AI systems collect, process, or make decisions using data relating to Maori, boards need governance structures that reflect partnership, protection, and participation. We help boards integrate these obligations into their AI governance without treating them as an afterthought or a compliance checkbox.

- Maori data sovereignty assessment for AI systems

- Board reporting on Treaty compliance in AI deployments

- Consultation frameworks for AI affecting Maori communities

- Alignment with Te Mana Raraunga principles

Governance Structure and Charter Development

Knowing the risks is only valuable if it changes how your board operates. We design practical governance structures -- committee mandates, escalation thresholds, reporting cadences, and decision authorities -- that give directors genuine oversight without requiring them to become technologists. Every structure is tailored to your organisation's size, sector, and AI maturity.

- Board AI governance charter with NZ-specific provisions

- Committee mandate design or expansion recommendations

- AI risk escalation and decision authority matrix

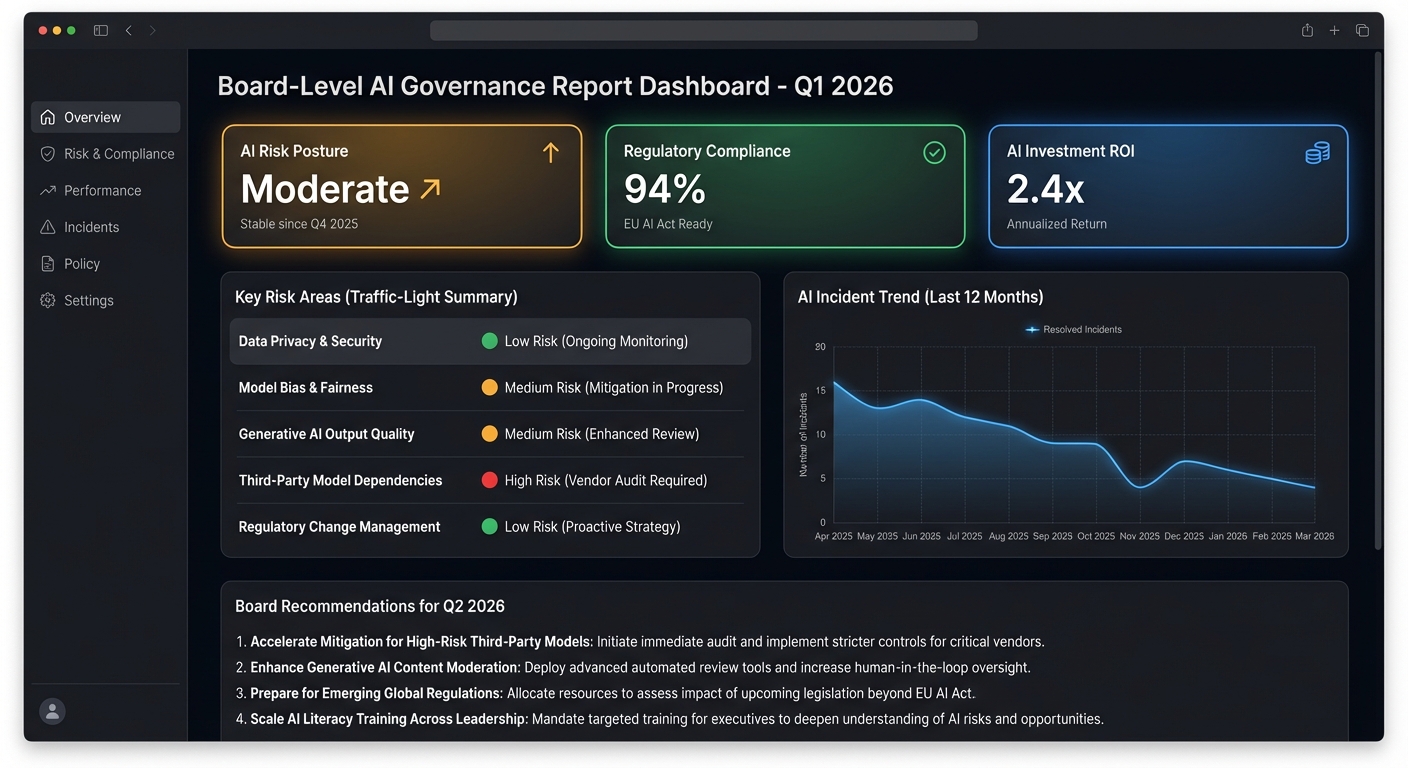

- Board-ready AI reporting templates and dashboards

What Your Board Receives

Tangible outputs that change how your board governs AI -- not a slide deck that gathers dust after the strategy offsite.

Board AI Governance Charter

A formal charter defining the board's AI oversight role, delegated authorities, and reporting requirements -- drafted for your constitution and committee structure.

Director Liability Briefing Pack

A confidential reference document mapping your organisation's AI footprint against Companies Act duties, with specific liability scenarios and mitigation actions.

Treaty Compliance Board Report

Assessment of how your AI systems interact with Maori data and communities, with board-level recommendations aligned to Te Tiriti obligations and data sovereignty principles.

FMA/RBNZ Readiness Assessment

Gap analysis of your AI governance against current FMA conduct expectations and RBNZ prudential risk standards, with a prioritised remediation roadmap.

AI Risk Register for Directors

A board-level risk register categorising every AI system by risk tier, with oversight requirements and escalation triggers appropriate for director consumption.
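As a rough illustration of how such a register might be structured, the sketch below models each AI system as a record with a risk tier, an oversight requirement, and an escalation trigger, then filters the register down to the entries a board would review. The three tiers, the field names, the `board_view` helper, and the example systems are all hypothetical placeholders, not a prescribed format or the contents of an actual register.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    # Hypothetical three-tier scale; a real register may use different tiers.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class AISystemEntry:
    name: str
    purpose: str
    tier: RiskTier
    oversight: str           # who reviews the system and how often
    escalation_trigger: str  # the event that sends the system to the board


def board_view(register, minimum_tier=RiskTier.HIGH):
    """Return entries at or above minimum_tier, highest tier first."""
    selected = [entry for entry in register if entry.tier >= minimum_tier]
    return sorted(selected, key=lambda entry: entry.tier, reverse=True)


# Illustrative entries only.
register = [
    AISystemEntry("meeting-summariser", "internal notes", RiskTier.LOW,
                  "management, annual", "use extended to customer data"),
    AISystemEntry("claims-triage", "claims processing", RiskTier.MEDIUM,
                  "risk committee, six-monthly", "rise in overturned decisions"),
    AISystemEntry("credit-scoring-model", "credit decisions", RiskTier.HIGH,
                  "risk committee, quarterly", "material shift in approval rates"),
]

for entry in board_view(register, RiskTier.MEDIUM):
    print(f"{entry.tier.name:<6} {entry.name}: escalate on {entry.escalation_trigger}")
```

Keeping the tier as an ordered value means the same register can serve both a full management view and a shorter board view, simply by changing the minimum tier.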

Quarterly Regulatory Briefings

Ongoing updates on NZ regulatory developments, Privacy Commissioner guidance, and international AI governance trends relevant to your sector and obligations.

Board Question Framework

A structured set of questions directors should ask management about AI deployments -- organised by risk category, designed to demonstrate informed oversight.

Annual Governance Review

Yearly assessment of your AI governance maturity against evolving NZ expectations, with recommendations for the coming year's governance programme.

Boards We Work With

Different organisations face different AI governance pressures. We tailor our approach to your regulatory exposure, organisational scale, and AI maturity.

NZX-Listed and Large Private Companies

For boards navigating the NZX Corporate Governance Code alongside AI adoption. Whether you are deploying AI in customer-facing products or internal operations, your directors need a governance posture that satisfies both the Code's principles and your Companies Act duties. We build governance structures that scale with your AI ambitions.

Crown Entities and Government Organisations

Public sector boards face additional layers: the Public Service AI Framework, Cabinet expectations on algorithmic transparency, and Treaty obligations that are non-negotiable rather than aspirational. We help Crown entity boards meet these obligations while enabling the AI-driven efficiency gains government agencies are expected to deliver.

FMA and RBNZ Regulated Entities

Financial services boards face the most immediate regulatory pressure. The FMA's conduct expectations and the RBNZ's prudential focus increasingly encompass AI and algorithmic systems. If your organisation uses AI for credit decisions, claims processing, or customer advice, board-level governance is not optional -- it is what regulators will ask to see.

Organisations with Maori Community Impact

If your AI systems collect, analyse, or make decisions using data about Maori communities, your board has governance obligations that go beyond the Privacy Act. We work with boards to integrate Te Tiriti principles into AI governance in a way that is substantive, not performative -- ensuring partnership and data sovereignty are reflected in how AI is overseen.

Common Questions From NZ Directors

How does the Companies Act 1993 create personal liability for AI governance failures?

Sections 131 through 138 impose duties on directors to act in good faith, with care, diligence, and skill, and to avoid reckless trading. These duties are technology-agnostic -- they apply to every material risk your organisation faces, including AI. If an AI system causes financial loss, privacy breaches, or discriminatory outcomes, and the board did not exercise reasonable oversight, individual directors may be held personally liable. The standard is what a reasonable director in the same circumstances would have done. As AI governance becomes mainstream, the threshold for "reasonable" rises.

How do Te Tiriti obligations apply at board level for AI?

Te Tiriti o Waitangi creates obligations of partnership, protection, and participation. For AI governance, this means boards must consider who holds rangatiratanga over data collected from or about Maori, whether AI systems perpetuate existing inequities affecting Maori communities, and whether meaningful consultation has occurred before deploying AI in areas with Maori impact. These are not operational decisions that can be delegated to project teams. They require board-level attention, and for Crown entities they are legally grounded obligations rather than voluntary best practice.

What does the FMA expect from boards regarding AI governance?

The FMA has not issued AI-specific regulations, but its conduct expectations already encompass technology systems that affect customers. If your organisation uses AI in financial advice, credit assessment, insurance underwriting, or customer interactions, the FMA expects the board to have oversight of those systems' fairness, transparency, and consumer outcomes. We help boards understand where their AI deployments intersect with FMA expectations and build governance that demonstrates proactive oversight.

Should our board create a dedicated AI committee or expand an existing one?

There is no single right answer. For organisations where AI is transformative to the business model, a dedicated technology and AI committee may be warranted. For most NZ organisations, expanding the mandate of the risk committee or audit and risk committee is more practical. What matters is that AI governance has a clear home within your board structure, with defined escalation paths and reporting cadences. We assess your current committee workload, AI maturity, and strategic direction to recommend the structure that will actually function, not just look good in an annual report.

NZ AI governance is mostly voluntary. Why invest now?

Precisely because it is voluntary. Because New Zealand does not prescribe specific AI governance requirements, courts and regulators will look at what comparable organisations were doing to determine the standard of care. Boards that establish governance early are not just protecting their organisations -- they are shaping the benchmark. Furthermore, the FMA, RBNZ, and Privacy Commissioner are all signalling increased scrutiny of AI. Organisations that wait for mandatory requirements will be building governance under pressure and public scrutiny, rather than ahead of it.

Your Board Approved AI Adoption. Now It Needs Board-Level AI Governance.

A 90-minute director briefing is where most boards start. We walk through your Companies Act obligations, your current AI exposure, and the governance gaps that create personal liability. No sales pitch -- just the information directors need to make informed decisions about AI oversight.

Complimentary 90-minute briefing for boards considering AI governance engagement