AI Impact Assessment for New Zealand Organisations

New Zealand has no mandated AI impact assessment regime. But the Privacy Act 2020, the Companies Act, and the Code of Health and Disability Services Consumers' Rights all create liability for AI-driven decisions. The gap between "no specific AI law" and "no legal exposure" is where organisations get into trouble.

We conduct AI impact assessments designed for the New Zealand context -- including cultural safety for Maori and Pacific populations, Treaty of Waitangi compliance, and population-appropriate methodologies that international frameworks consistently miss.

International Frameworks Were Not Built for Aotearoa

Most AI risk frameworks originate from the EU, UK, or North America. They assume different population structures, different legal traditions, and different cultural obligations. Applying them directly to New Zealand creates blind spots.

What off-the-shelf AI assessments miss in New Zealand:

- Treaty of Waitangi obligations -- AI impacts on Maori communities require specific assessment that no international framework addresses

- Cultural safety for Maori and Pacific populations -- bias testing calibrated for US or European demographics produces misleading results here

- Health Information Privacy Code 2020 -- healthcare AI needs assessment against NZ-specific information privacy rules, not generic HIPAA analogies

- Companies Act director liability -- directors face personal exposure for AI harms under NZ law, a risk profile that differs from other jurisdictions

Waitemata Healthcare discovered this when international AI frameworks proved inappropriate for their context. They are not unique. Any organisation serving New Zealand's population mix needs assessment methodologies built for that population.

No Mandate, Real Liability

New Zealand does not mandate AI impact assessments. But the Privacy Act 2020, Consumer Guarantees Act, and Companies Act all apply to AI-driven decisions. Directors cannot claim ignorance of AI harms as a defence. The absence of specific AI regulation does not mean the absence of legal consequences.

Cultural Safety Gaps

AI systems trained on international datasets often produce discriminatory outcomes for Maori and Pacific populations. Healthcare triage algorithms, credit scoring models, and recruitment tools all carry this risk. Standard fairness metrics do not account for the specific equity obligations New Zealand organisations hold.

Unanswered Questions

What happens to personal data if your AI vendor is acquired or goes bankrupt? Who is accountable when an AI system fails? Are conflicts of interest between your AI provider and your organisation documented? Who monitors AI performance after deployment? These are the questions our assessments answer.

AI Impact Assessment Methodology Built for the New Zealand Context

Our approach starts from the premise that AI operating in Aotearoa must be assessed against Aotearoa's legal landscape, population needs, and cultural obligations. We do not adapt international templates. We built our NZ methodology from the ground up.

Cultural Impact Assessment

We evaluate how AI systems affect Maori and Pacific communities specifically. This includes testing for disparate outcomes across NZ ethnic groups, assessing whether Maori data sovereignty principles are upheld, and reviewing whether AI decision-making respects the partnership, protection, and participation principles of Te Tiriti.

NZ Legal Exposure Mapping

We map every AI system against the Privacy Act 2020, the Health Information Privacy Code 2020, the Code of Health and Disability Services Consumers' Rights, the Consumer Guarantees Act, and Companies Act director duties. You receive a clear picture of where liability sits and who holds it.

Population-Appropriate Methods

Fairness testing must reflect the population the AI serves. We use NZ-specific demographic benchmarks, test against NZ ethnic categories, and assess outcomes for population groups that international tools ignore entirely. An assessment calibrated for London or San Francisco is not calibrated for Auckland or Christchurch.
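As a rough illustration of what testing outcomes by population group can look like, the sketch below computes the favourable-outcome rate for each group and its ratio to a reference group. It is a minimal sketch under stated assumptions, not our assessment methodology: the group labels, the synthetic records, the function names, and the 0.8 flagging threshold are all illustrative placeholders, and the threshold in particular is a convention borrowed from overseas practice rather than an NZ benchmark.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the favourable-outcome rate for each group.

    records: iterable of (group, favourable) pairs, where favourable is a bool.
    """
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        if outcome:
            favourable[group] += 1
    return {group: favourable[group] / totals[group] for group in totals}


def disparate_impact_ratios(records, reference_group):
    """Return each group's favourable-outcome rate divided by the reference group's rate."""
    rates = selection_rates(records)
    reference_rate = rates[reference_group]
    if reference_rate == 0:
        raise ValueError("reference group has no favourable outcomes")
    return {group: rate / reference_rate for group, rate in rates.items()}


# Synthetic, illustrative data only: (group label, favourable outcome?)
records = (
    [("European", True)] * 80 + [("European", False)] * 20
    + [("Maori", True)] * 60 + [("Maori", False)] * 40
    + [("Pacific", True)] * 50 + [("Pacific", False)] * 50
)

ratios = disparate_impact_ratios(records, reference_group="European")

# Placeholder threshold (assumption): flag groups whose ratio falls below 0.8.
flagged = sorted(group for group, ratio in ratios.items() if ratio < 0.8)
```

A ratio well below 1.0 for a group is a prompt for closer review, not a finding in itself. Which groups to compare, which outcome counts as favourable, and what threshold applies all depend on the system and the population it serves.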

What Our AI Impact Assessment Covers

Treaty of Waitangi Impact Review

We assess how AI systems affect Maori communities, whether data governance upholds Maori data sovereignty, and whether decision-making processes align with Treaty principles. This includes evaluating consultation mechanisms, representation in training data, and equitable outcomes across iwi and hapu.

Privacy Act 2020 and HIPC Compliance

AI systems that process personal information must comply with the Privacy Act's information privacy principles. Healthcare AI faces additional obligations under the Health Information Privacy Code 2020. We test whether your AI systems meet both, including cross-border data transfer restrictions that affect cloud-based AI services.

Cultural Safety Analysis

We test AI outputs for disparate impact on Maori and Pacific populations using NZ-appropriate benchmarks. Healthcare AI receives particular scrutiny against the Code of Health and Disability Services Consumers' Rights, which guarantees freedom from discrimination and the right to services that account for cultural needs.

Director Liability Assessment

Under the Companies Act, directors must exercise reasonable care, diligence, and skill. If an AI system causes harm and the board had no visibility over its risks, directors may face personal liability. We identify which AI risks create director exposure and recommend governance structures to manage that exposure.

Vendor and Supply Chain Risk

What happens to your data if your AI vendor is sold? Where does your intellectual property sit? Are there conflicts of interest between your AI provider's commercial goals and your organisation's obligations? We assess third-party AI arrangements for contractual gaps, data protection risks, and ongoing monitoring responsibilities.

Ongoing Accountability Structure

An impact assessment is a point-in-time exercise. AI systems change. We evaluate whether your organisation has the monitoring, escalation, and review mechanisms to maintain accountability after our assessment is complete. Who is responsible when AI performance degrades? Our report answers that question.

Our Assessment Process

Scoping and Context Mapping (1-2 weeks)

We identify every AI system in scope, map the populations they affect, and document the legal and cultural obligations that apply. This includes stakeholder interviews across leadership, operations, and -- where relevant -- iwi or community liaison. We define which NZ-specific standards apply to each system.

Technical and Cultural Assessment (2-6 weeks)

Parallel workstreams assess technical performance and cultural safety. We conduct bias testing using NZ demographic benchmarks, evaluate Privacy Act and HIPC compliance, review Treaty impact, and test third-party vendor arrangements for contractual and data protection gaps. Evidence collection follows professional audit standards.

Findings and Liability Mapping (1-2 weeks)

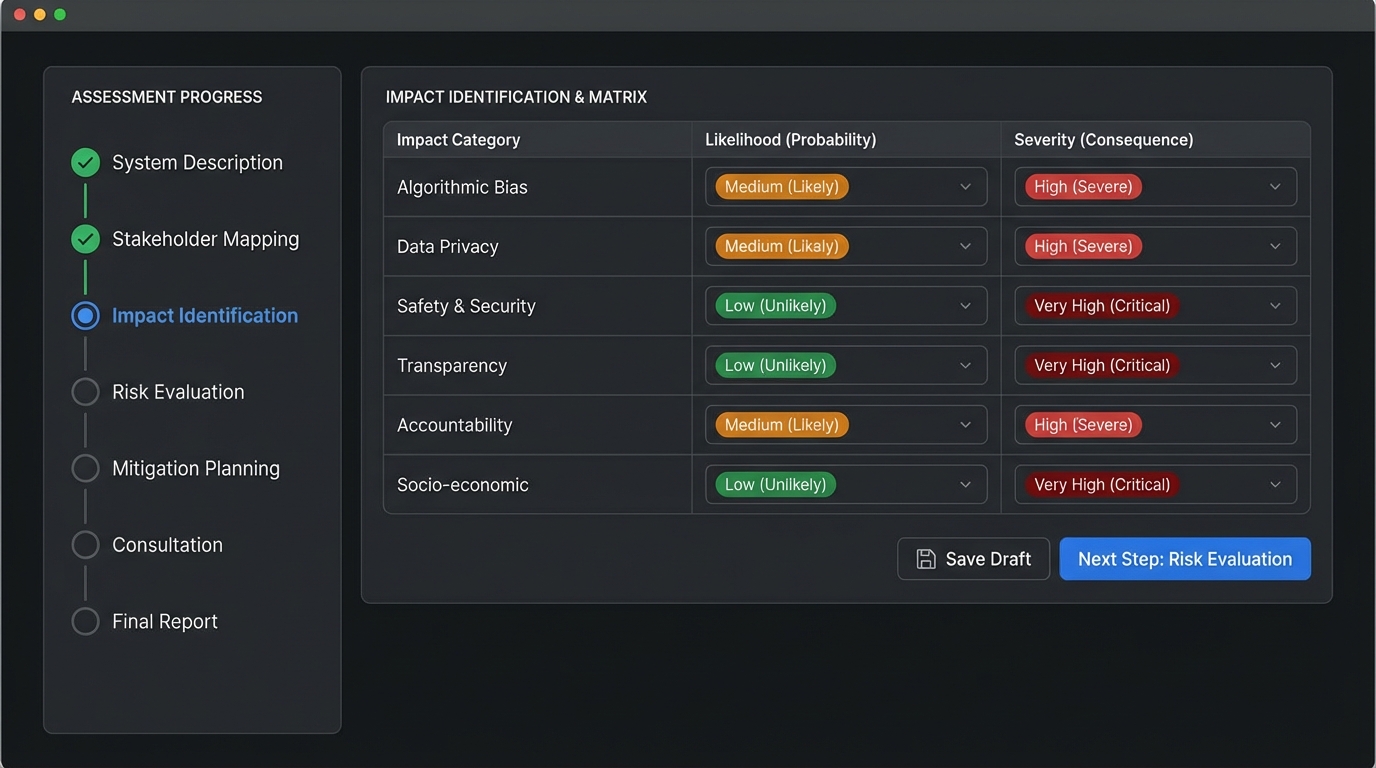

We consolidate findings into a structured report that maps each risk to its legal basis -- Privacy Act provision, Companies Act duty, Treaty principle, or consumer rights obligation. Risks are rated by severity and likelihood. Recommendations include specific remediation steps with accountability owners.
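One way to picture a register that maps each risk to a legal basis, a severity and likelihood rating, and an accountable owner is the sketch below. Everything in it is an assumption made for illustration: the 1 to 5 scales, the multiplication of severity by likelihood, the rating cut-offs, the field names, and the two example entries are hypothetical and do not describe the format of our reports.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    description: str
    legal_basis: str
    severity: int    # assumed scale: 1 (minor) to 5 (severe)
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    owner: str

    @property
    def score(self) -> int:
        # Assumed scoring rule: severity multiplied by likelihood.
        return self.severity * self.likelihood

    @property
    def rating(self) -> str:
        # Assumed cut-offs, chosen only for this example.
        if self.score >= 15:
            return "high"
        if self.score >= 8:
            return "medium"
        return "low"


# Hypothetical entries for illustration only.
register = [
    Risk(
        description="Vendor contract silent on data handling after acquisition",
        legal_basis="Privacy Act 2020",
        severity=3,
        likelihood=2,
        owner="Procurement lead",
    ),
    Risk(
        description="Triage model under-refers one population group",
        legal_basis="Code of Health and Disability Services Consumers' Rights",
        severity=5,
        likelihood=3,
        owner="Clinical director",
    ),
]

# Highest-scoring risks first, so remediation effort goes where exposure is greatest.
ranked = sorted(register, key=lambda risk: risk.score, reverse=True)

for risk in ranked:
    print(f"{risk.rating:<6} {risk.score:>2}  {risk.legal_basis}: {risk.description} ({risk.owner})")
```

Keeping the legal basis and the owner on the same record as the rating means each entry shows both why the risk matters and who is expected to act on it.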

Governance Implementation Support (1-2 weeks)

We present findings to boards and leadership teams, establish ongoing monitoring frameworks, and transfer the knowledge your organisation needs to maintain AI accountability independently. This includes templates for ongoing cultural safety review and Treaty compliance monitoring.

What You Receive

Cultural Safety Assessment

- Disparate impact analysis across NZ ethnic populations

- Maori and Pacific population outcome testing

- Recommendations for culturally appropriate AI governance

Treaty Impact Review

- Assessment against partnership, protection, and participation principles

- Maori data sovereignty compliance evaluation

- Remediation plan for identified Treaty compliance gaps

Legal Liability and Compliance Report

- Privacy Act 2020 and HIPC compliance analysis per AI system

- Companies Act director duty risk assessment

- Vendor contract gap analysis and data protection review

Ongoing Monitoring Framework

- AI performance monitoring templates and escalation paths

- Cultural safety review cycle documentation

- Accountability assignment for each AI system and risk

Frequently Asked Questions

If there is no mandatory AI assessment in NZ, why should we do one?

Because the liability exists even without a specific AI law. The Privacy Act 2020 applies to AI systems that process personal information. The Companies Act creates director duties that extend to AI governance. The Code of Health and Disability Services Consumers' Rights applies to AI in healthcare. Conducting an assessment proactively is significantly cheaper than responding to a Privacy Commissioner investigation or defending a director liability claim.

How is a cultural safety assessment different from standard bias testing?

Standard bias testing typically checks for disparate outcomes across broad demographic categories using international benchmarks. Cultural safety assessment goes further: it tests against NZ-specific population groups, evaluates outcomes against Treaty principles, assesses whether Maori data sovereignty is respected, and examines whether AI decision-making accounts for the cultural context of the communities it affects. The distinction matters because an AI system can pass generic bias testing while still producing inequitable outcomes for Maori and Pacific populations.

What if our AI vendor is based overseas?

This creates additional assessment considerations. The Privacy Act 2020 restricts cross-border data transfers. We evaluate vendor contracts for data protection adequacy, assess what happens to your data if the vendor is acquired or ceases operations, identify conflicts of interest, and determine whether your organisation retains meaningful control over AI systems hosted or managed offshore.

Do directors really face personal liability for AI harms?

Under the Companies Act, directors must act with reasonable care, diligence, and skill. If a board has no visibility over AI systems operating in the organisation -- no risk register, no impact assessment, no monitoring framework -- and those systems cause harm, the lack of governance itself becomes the liability exposure. Our assessment establishes the governance baseline directors need.

How long does an NZ-context assessment take?

Typically 4 to 10 weeks depending on scope. A focused assessment of a single high-risk AI system (such as a healthcare triage tool) takes 4 to 6 weeks. A comprehensive assessment across an organisation's full AI portfolio, including cultural safety analysis and vendor reviews, takes 8 to 10 weeks. We scope based on your risk profile, the populations your AI systems serve, and which legal obligations apply.

What ongoing responsibilities remain after the assessment?

AI systems are not static. Models drift, vendor arrangements change, and populations evolve. Our assessment includes an ongoing monitoring framework that defines who is responsible for continued oversight, what triggers a reassessment, and how cultural safety is maintained over time. We build the capability for your organisation to sustain accountability without permanent reliance on external assessors.

Related Services

AI Governance Consulting

Design governance frameworks that reflect NZ legal requirements and Treaty obligations from the outset.

Learn more →

Third-Party AI Risk Management

Evaluate vendor arrangements, offshore data risks, and contractual gaps in your AI supply chain.

Learn more →

Regulatory Compliance

Navigate the Privacy Act 2020, HIPC, Companies Act director duties, and consumer rights obligations for AI systems.

Learn more →

Request an AI Impact Assessment for Your Organisation

An independent assessment built for the New Zealand context gives you clarity on legal exposure, cultural safety, and governance gaps -- before they become complaints, investigations, or front-page stories.

No-obligation initial consultation | Fixed-price engagements | NZ-context methodology