AI Governance Consulting for New Zealand Organisations

81% of New Zealanders want AI regulation. Only 6% know what rules exist today. That 75-point awareness gap is where governance risk lives -- and where we help you build the structures that turn ambiguity into advantage.

We build governance programmes grounded in te ao Māori, aligned to the Public Service AI Framework and OECD Principles, and designed to satisfy FMA, RBNZ, and the Office of the Privacy Commissioner before mandatory requirements arrive.

Three governance gaps holding NZ organisations back

New Zealand was the last OECD country to publish a national AI strategy. That delay created a vacuum -- and organisations are filling it with guesswork, borrowed Australian frameworks, or nothing at all.

The 75-point awareness gap

Most New Zealanders -- and most boards -- cannot name a single AI regulation that applies to them. The Privacy Act 2020, Fair Trading Act, and Companies Act already impose obligations on AI use. Organisations that do not realise this are already exposed.

Treaty obligations without a playbook

How does your AI system handle Māori data? Does your algorithmic decision-making respect tino rangatiratanga? Kaitiakitanga demands guardianship of data, not just compliance with it. Standard governance frameworks from overseas ignore these obligations entirely.

Voluntary does not mean optional

New Zealand's light-touch regulatory approach means governance is technically voluntary. But the FMA expects financial services firms to manage AI risk. The Privacy Commissioner enforces the 13 Information Privacy Principles. And 25% of NZ leaders say governance is the "missing link" in their AI strategy. Voluntary today becomes mandatory tomorrow.

of NZ leaders are prioritising AI agents in their organisations

potential GDP contribution from generative AI by 2038

25% of NZ leaders say governance is the "missing link" in their AI strategy

A governance programme built for how NZ actually works

We do not import governance templates from other jurisdictions and swap the regulator names. Every programme we design starts from the ground up: your regulatory context, your Treaty obligations, your organisational culture, your risk appetite.

Map the landscape

We audit every AI system, algorithm, and automated decision in your organisation. We identify who built it, who approved it, what data it touches, and whether it affects Māori communities or data. This is not a questionnaire. It is a forensic inventory that gives your board its first complete picture of AI exposure.
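As a rough illustration only, one inventory entry could be captured in a structure like the Python sketch below. The class name, field names, and escalation rules are hypothetical and chosen for this example; a real inventory would be shaped by your own systems and obligations.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory (illustrative field names)."""
    name: str
    built_by: str                       # team or vendor that built the system
    approved_by: Optional[str] = None   # None means no recorded approval
    data_touched: List[str] = field(default_factory=list)
    affects_maori_data: bool = False    # flags the record for cultural governance review


def review_reasons(record: AISystemRecord) -> List[str]:
    """Return the reasons a record should be escalated (empty list if none)."""
    reasons = []
    if record.approved_by is None:
        reasons.append("no recorded approver")
    if not record.data_touched:
        reasons.append("data sources not documented")
    if record.affects_maori_data:
        reasons.append("cultural governance review required")
    return reasons
```

A record with no approver and a flag for Māori data would return two reasons, giving the board a simple list of systems that need attention first.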

Embed cultural governance

We integrate Māori data sovereignty principles and Treaty of Waitangi obligations into the governance structure from the start -- not as a bolt-on appendix. This includes data classification aligned to iwi expectations, tikanga-informed impact assessments, and kaitiakitanga-based stewardship models for data that relates to tangata whenua.

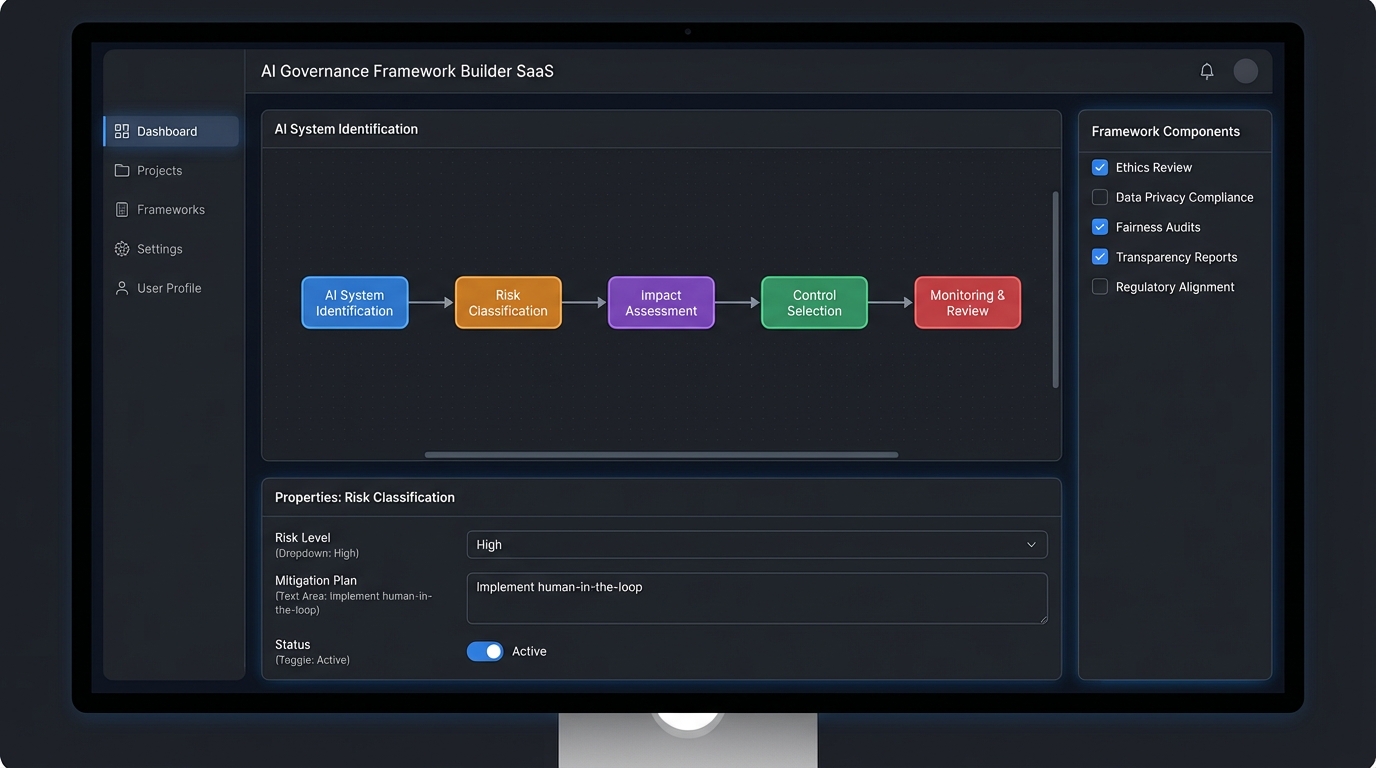

Design the operating model

We build clear accountability structures: who owns AI risk, who signs off on new deployments, who monitors ongoing performance, and who reports to governance committees. We align these to the Public Service AI Framework for government agencies and to FMA/RBNZ expectations for financial services organisations.
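The four accountability questions above can be checked mechanically once they are written down. The sketch below is a minimal example under assumed names: the role keys and the matrix layout are hypothetical, not a prescribed format.

```python
from typing import Dict, List

# Hypothetical role keys mirroring the four questions: who owns the risk,
# who signs off deployments, who monitors performance, who reports upward.
REQUIRED_ROLES = (
    "risk_owner",
    "deployment_approver",
    "performance_monitor",
    "committee_reporter",
)


def unassigned_roles(matrix: Dict[str, Dict[str, str]]) -> Dict[str, List[str]]:
    """Map each AI system to the required roles that have no named person."""
    gaps = {}
    for system, roles in matrix.items():
        # A role counts as unassigned if it is missing or blank.
        missing = [role for role in REQUIRED_ROLES if not roles.get(role, "").strip()]
        if missing:
            gaps[system] = missing
    return gaps
```

Running this over the full matrix before each governance committee meeting is one way to surface systems that nobody formally owns.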

Write the policies that matter

We draft the policies your organisation needs -- not the ones a generic template vendor sells. Every policy maps to specific NZ legal obligations: the 13 Information Privacy Principles, Fair Trading Act requirements for AI-generated content, and Companies Act director duties around emerging technology risk.

Activate and sustain

A framework that nobody uses is worse than no framework at all. We train your teams, run tabletop exercises, establish reporting rhythms, and build internal capability so governance becomes part of how your organisation operates -- not a document that lives in SharePoint.

What your organisation walks away with

Every deliverable is written for New Zealand. Not adapted from an overseas template. Not a theoretical document. Something your teams will use on Monday morning.

NZ-Specific Governance Framework

- Governance committee structure with terms of reference

- Accountability matrix mapping AI ownership across the organisation

- Treaty of Waitangi compliance mapping for AI systems

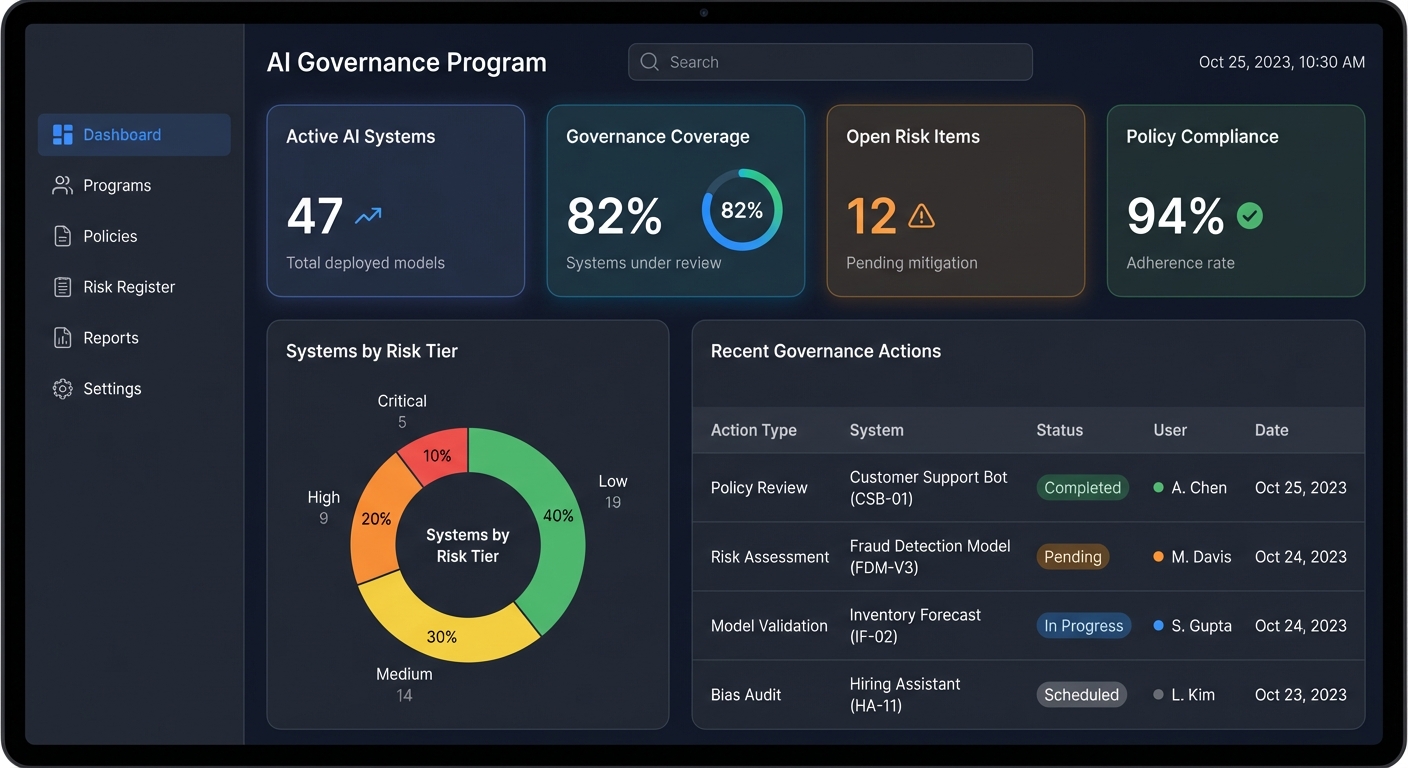

- Board-level AI risk reporting templates and dashboards

Policy Suite Aligned to NZ Law

- Responsible AI Use Policy mapped to the 13 Privacy Principles

- AI Risk Classification and Triage Policy

- Māori Data Governance and Sovereignty Policy

- AI Vendor and Third-Party Due Diligence Policy

Regulatory Readiness Package

- Privacy Act 2020 compliance assessment for all AI systems

- Public Service AI Framework alignment report (government agencies)

- FMA/RBNZ expectations mapping for financial services

- OECD AI Principles alignment and gap analysis

Activation and Capability Building

- 12-month phased implementation roadmap with milestones

- Governance team training programme and workshop materials

- Tabletop exercise scenarios for AI incident response

- Governance maturity scorecard with quarterly benchmarks

Designed for organisations navigating NZ's unique AI landscape

Every sector in Aotearoa faces different AI governance pressures. We tailor our approach to match yours.

Government agencies and public service

Central and local government bodies -- from Te Whatu Ora to Auckland Council -- implementing the Public Service AI Framework and managing AI procurement under Cabinet expectations.

Financial services under FMA and RBNZ oversight

Banks like ANZ NZ, BNZ, ASB, Westpac NZ, and Kiwibank deploying AI for credit decisions, fraud detection, and customer service -- where the FMA and RBNZ expect governance proportionate to risk.

Organisations handling Māori data

Any organisation -- public or private -- that collects, processes, or makes decisions using data relating to Māori communities, iwi, hapū, or whānau. Treaty obligations demand governance that goes beyond standard privacy compliance.

Organisations preparing for mandatory regulation

Forward-thinking NZ organisations that recognise voluntary frameworks are a stepping stone, not a destination. Build governance now and avoid the scramble when rules harden.

Questions NZ organisations ask us

NZ has no mandatory AI laws. Why invest in governance now?

Because existing laws already apply. The Privacy Act 2020 regulates how AI systems collect and use personal information. The Fair Trading Act 1986 prohibits misleading conduct -- including by AI. The Companies Act 1993 requires directors to act with reasonable care, which increasingly includes oversight of AI risk. The National AI Strategy signals that regulation will tighten. Organisations that build governance now will be ready. Those that wait will be scrambling.

How do you integrate Treaty of Waitangi obligations into an AI governance framework?

We start with the principle of kaitiakitanga -- guardianship. We classify data that relates to Māori communities, iwi, hapū, or whānau and build specific governance controls around it: who can access it, how AI systems can use it, what consultation is required before automated decisions affect tangata whenua, and how data sovereignty principles are enforced through your technology stack. This is not a section in an appendix. It is woven through every policy and process.
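To make "enforced through your technology stack" concrete, the sketch below shows one possible shape for a control check in Python. Everything here is an assumption for illustration: the class, the classification labels, and the rules would in practice be agreed with the relevant iwi, hapū, or whānau, not set by a developer.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class DataControl:
    """Hypothetical control attached to one classified dataset."""
    classification: str               # illustrative label, e.g. "iwi_health_data"
    allowed_roles: FrozenSet[str]     # who can access the data
    allowed_ai_uses: FrozenSet[str]   # how AI systems may use it
    consultation_required: bool       # consultation needed before automated decisions


def blockers(control: DataControl, role: str, ai_use: str,
             consultation_done: bool) -> List[str]:
    """Return every reason the proposed use is blocked (empty list means allowed)."""
    found = []
    if role not in control.allowed_roles:
        found.append(f"role '{role}' may not access {control.classification}")
    if ai_use not in control.allowed_ai_uses:
        found.append(f"AI use '{ai_use}' is not approved for {control.classification}")
    if control.consultation_required and not consultation_done:
        found.append("required consultation has not been recorded")
    return found
```

Returning all blockers at once, instead of stopping at the first, gives the requesting team a complete picture of what must be resolved before the use can proceed.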

Our agency needs to implement the Public Service AI Framework. Where do we start?

The Public Service AI Framework (February 2025) sets expectations for how government agencies procure, deploy, and govern AI. We map your current AI systems against the Framework's requirements, identify gaps, and build an implementation plan that satisfies Cabinet expectations. For most agencies, the starting point is an AI inventory -- you cannot govern what you cannot see.

What does the FMA expect from financial services firms using AI?

The FMA has not published prescriptive AI rules, but it expects licensed entities to manage material risks -- and AI is increasingly material. Under the CoFI Act 2022, fair conduct obligations extend to how you use AI in customer-facing decisions. The RBNZ expects operational resilience that includes technology risk. Our frameworks map directly to these expectations so you can demonstrate due diligence when regulators ask.

Can we use this framework to align with international standards like ISO 42001?

Yes. We design governance programmes that satisfy NZ requirements while maintaining alignment with ISO 42001, OECD AI Principles, and the NIST AI Risk Management Framework. If your organisation operates across borders or wants international certification, the governance programme we build will serve as the foundation.

How long does this take and what does the engagement look like?

A typical governance programme runs 10-14 weeks across five phases: landscape mapping, cultural governance design, operating model development, policy drafting, and activation. Government agencies often run phased engagements aligned to procurement cycles and budget approvals. We tailor the timeline to your organisation's pace and capacity.

Explore related NZ services

Privacy Act 2020 Compliance for AI

Map your AI systems against all 13 Information Privacy Principles. Address automated decision-making, cross-border data transfers, and Privacy Commissioner expectations.

Learn more →

Public Service AI Framework Alignment

Purpose-built guidance for government agencies implementing the February 2025 Framework, including procurement evaluation, risk tiering, and Cabinet reporting obligations.

Learn more →

Māori Data Governance

Implement data sovereignty principles, tikanga-informed governance structures, and Treaty-aligned policies for AI systems that process or affect Māori data.

Learn more →

Start Your AI Governance Consulting Engagement

The organisations that build AI governance now -- before mandatory rules arrive -- will move faster, face less disruption, and earn more trust. Start with a conversation about where your organisation stands today.