AI Audit and Assessment for New Zealand Organisations

New Zealand has no prescribed AI audit requirements. That means when something goes wrong, there is no checklist to hide behind -- only the question of whether your organisation did enough.

Our independent AI audit maps every gap between what your AI systems actually do and what the Privacy Act 2020, FMA guidance, Treaty obligations, and international best practice demand.

The Voluntary Regulation Trap

New Zealand's light-touch approach to AI sounds like freedom. In practice, it shifts the entire burden of getting it right onto your organisation.

No Rules Does Not Mean No Risk

81% of New Zealanders want AI regulation, yet only 6% know what actually exists. The gap between public expectation and current law creates reputational and legal exposure that most organisations have not quantified.

Regulators Are Already Watching

The FMA surveyed AI use across banking, insurance, asset management, and financial advice in 2024. Nine out of 10 financial firms are already using AI. The RBNZ expects entities to manage AI risks under existing obligations. Voluntary does not mean invisible.

Treaty Obligations Demand More

AI systems that process data about Maori communities carry responsibilities that international frameworks were not designed to address. Waitemata Healthcare found global AI standards inappropriate for the NZ context. Te Tiriti compliance requires purpose-built assessment.

You Set the Standard. We Measure Against It.

Without AI-specific legislation, NZ organisations must apply existing laws to new technology. The Privacy Act 2020, Fair Trading Act 1986, Companies Act 1993, and CoFI Act 2022 all have implications for AI -- but none spell out exactly what "compliant" looks like.

That ambiguity is precisely why an independent audit matters. We map your AI systems against every applicable obligation, flag what falls short, and give you a defensible position when regulators come asking.

Privacy Act 2020: Principle-by-principle review across all 13 Information Privacy Principles as they apply to your AI systems

FMA and RBNZ expectations: Assessment against the standards regulators apply when reviewing AI use in regulated sectors

Public Service AI Framework: Alignment check against the government's February 2025 framework and OECD AI Principles

Te Tiriti and Maori data sovereignty: Cultural impact assessment grounded in kaitiakitanga and te ao Maori principles

How the Audit Works

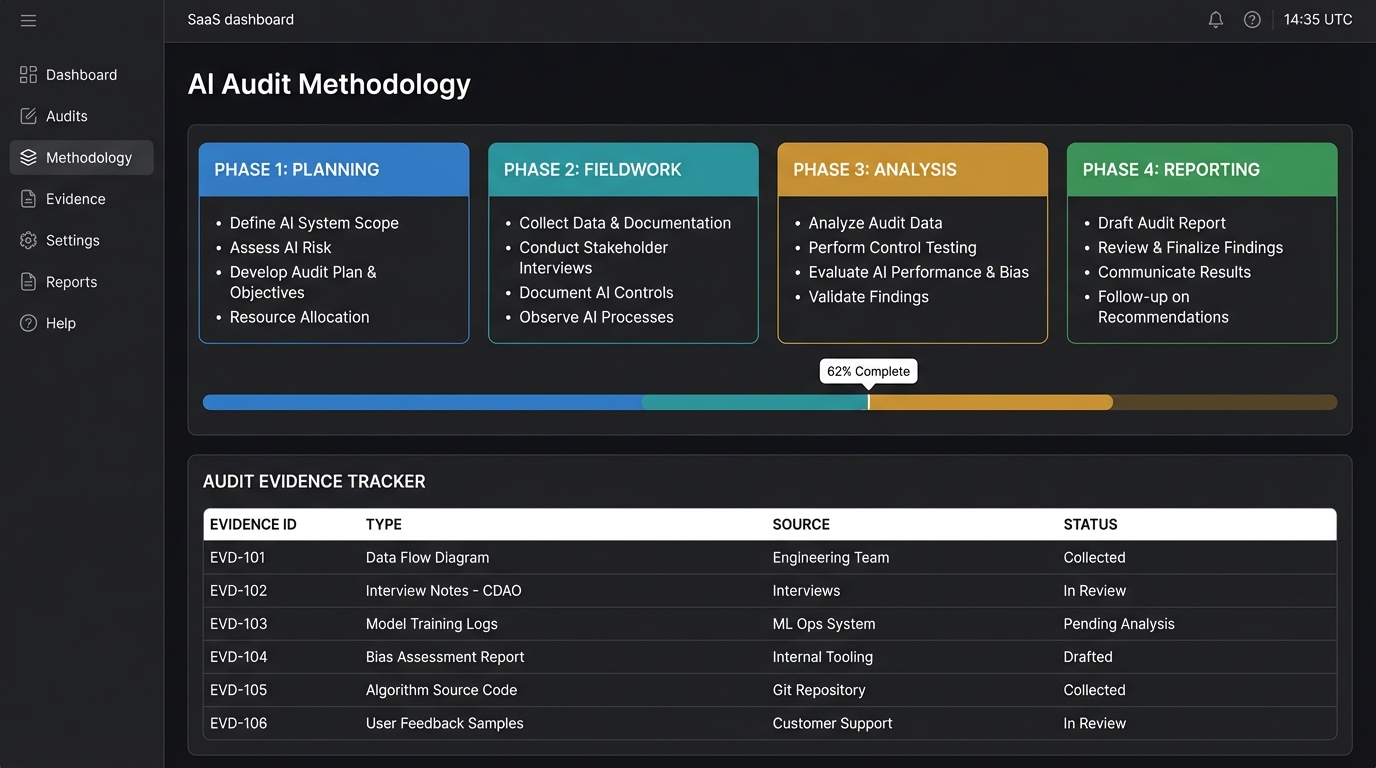

Our process is designed for the NZ regulatory context: principles-based, culturally informed, and built to produce findings that hold weight with the FMA, Privacy Commissioner, and your board alike.

Discovery and Mapping

We catalogue every AI system in your organisation -- including tools teams adopted without formal approval. Shadow AI is where most blind spots live. We map each system to the data it touches, the decisions it influences, and the people it affects.
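As a rough illustration of what such a catalogue can look like, here is a minimal sketch of an inventory record. The class name, field names, and sample entries are hypothetical and are not taken from our audit tooling; the sketch only shows the idea of mapping each system to its data, decisions, and affected people, and of flagging shadow AI.

```python
from dataclasses import dataclass, field


@dataclass
class AISystem:
    """One row of a hypothetical AI inventory."""
    name: str
    approved: bool  # False marks a tool adopted without formal approval (shadow AI)
    data_touched: list[str] = field(default_factory=list)
    decisions_influenced: list[str] = field(default_factory=list)
    people_affected: list[str] = field(default_factory=list)


def shadow_ai(inventory: list[AISystem]) -> list[str]:
    """Names of systems that were never formally approved."""
    return [s.name for s in inventory if not s.approved]


def unmapped(inventory: list[AISystem]) -> list[str]:
    """Names of systems missing at least one of the three mappings."""
    return [
        s.name
        for s in inventory
        if not (s.data_touched and s.decisions_influenced and s.people_affected)
    ]


# Illustrative entries only; real inventories come from discovery interviews and tooling.
inventory = [
    AISystem(
        name="Loan triage model",
        approved=True,
        data_touched=["credit history"],
        decisions_influenced=["loan approval"],
        people_affected=["loan applicants"],
    ),
    AISystem(
        name="Meeting transcription plug-in",
        approved=False,
        data_touched=["staff conversations"],
        people_affected=["employees"],
    ),
]

print("Shadow AI:", shadow_ai(inventory))
print("Incomplete mappings:", unmapped(inventory))
```

Any system that appears in either list is a candidate blind spot to resolve before the obligation analysis begins.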

Obligation Analysis

We walk through each applicable obligation: the 13 Privacy Principles, Fair Trading Act consumer protection provisions, Companies Act director duties, CoFI Act fair conduct requirements, and Treaty responsibilities. For each one, we assess whether your current practices satisfy it.

Cultural Impact Review

We assess how your AI systems interact with Maori data, communities, and cultural values. This includes evaluating data sovereignty practices, consent mechanisms, and whether algorithmic outputs risk disproportionate impact on Maori and Pacific peoples.

Controls and Evidence Testing

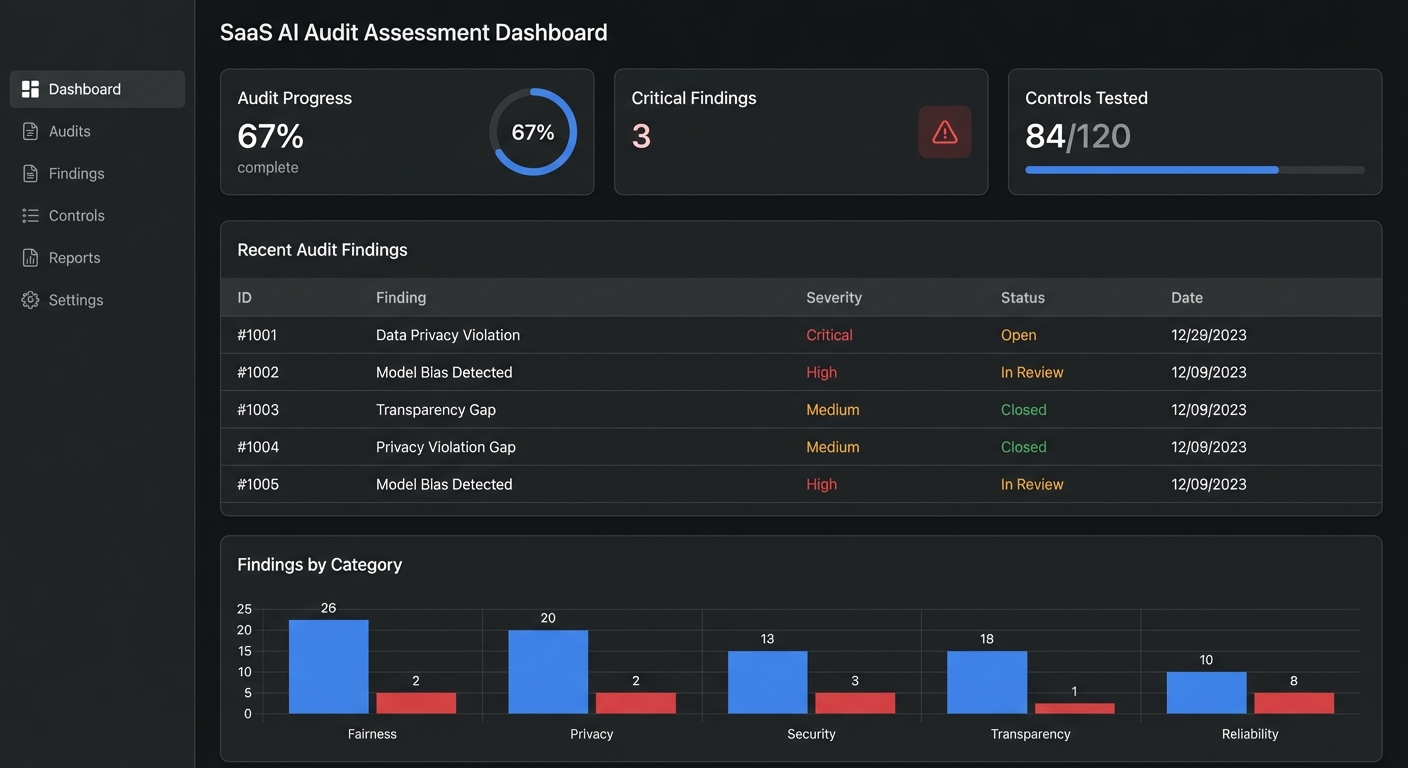

We verify that governance controls work in practice, not just on paper. Approval processes, human oversight mechanisms, monitoring dashboards, incident escalation paths -- we test whether they function when it matters.

Gap Scoring and Prioritisation

Every finding gets a severity score based on regulatory exposure, reputational risk, and remediation complexity. We rank gaps so your team can focus resources where they will reduce the most risk fastest.
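To make the idea concrete, here is a minimal sketch of how a severity score could combine the three factors. The 1-to-5 scales, the weights, and the sample findings are assumptions chosen for illustration, not our actual scoring model; treating higher remediation complexity as raising severity is also an assumption, and a team could just as reasonably invert it.

```python
from dataclasses import dataclass

# Hypothetical weights: regulatory exposure counts most, complexity least.
WEIGHTS = {"regulatory": 5, "reputational": 3, "complexity": 2}


@dataclass
class Finding:
    title: str
    regulatory_exposure: int     # assumed scale: 1 (low) to 5 (high)
    reputational_risk: int       # assumed scale: 1 (low) to 5 (high)
    remediation_complexity: int  # assumed scale: 1 (simple) to 5 (hard)


def severity(f: Finding) -> int:
    """Weighted sum of the three factors (illustrative formula only)."""
    return (
        WEIGHTS["regulatory"] * f.regulatory_exposure
        + WEIGHTS["reputational"] * f.reputational_risk
        + WEIGHTS["complexity"] * f.remediation_complexity
    )


def rank(findings: list[Finding]) -> list[Finding]:
    """Order findings from highest to lowest severity."""
    return sorted(findings, key=severity, reverse=True)


# Made-up findings for demonstration.
findings = [
    Finding("No AI inventory", 4, 3, 2),
    Finding("Chatbot lacks human override", 5, 4, 3),
    Finding("Outdated model documentation", 2, 2, 1),
]

for f in rank(findings):
    print(severity(f), f.title)
```

Whatever weights are used, the point is that they are agreed up front and applied consistently, so the ranking can be explained to a board or a regulator.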

Reporting and Defence Strategy

You receive a full audit report, an executive briefing, and a prioritised remediation plan. The report is structured to serve as evidence of proactive governance if the FMA, RBNZ, or Privacy Commissioner ever requests it.

Three Ways to Start

Every organisation is at a different stage. Pick the audit depth that matches your immediate need, then expand as priorities evolve.

Privacy Act Deep Dive

Targeted compliance review

A focused review of how your AI systems handle personal information against each of the 13 Information Privacy Principles. Includes automated decision-making transparency, cross-border data flows, and individual access rights.

- 13-principle compliance mapping

- Privacy Commissioner guidance alignment

- Mandatory breach notification readiness

Typical duration: 3-4 weeks

Full Governance Audit

End-to-end governance review

A comprehensive examination of your entire AI governance posture: strategy, policies, risk controls, monitoring, reporting, cultural considerations, and regulatory alignment. Scored against NZ and international benchmarks.

- Complete AI inventory and risk classification

- Treaty compliance and cultural impact assessment

- Multi-law obligation mapping (Privacy, Fair Trading, CoFI)

- Maturity scoring with NZ sector benchmarks

Typical duration: 6-8 weeks

Single System Review

Focused technical audit

A detailed examination of one specific AI system: how it was built, what data it uses, who it affects, whether its controls work, and how it performs against fairness and bias standards relevant to the NZ population.

- Model lineage and documentation audit

- Bias testing across NZ demographic groups

- Human oversight and override verification

Typical duration: 4-6 weeks per system

What Gets Delivered

Every deliverable is designed to do two things: give your leadership clarity on where you stand, and provide documented evidence of diligence if a regulator ever asks.

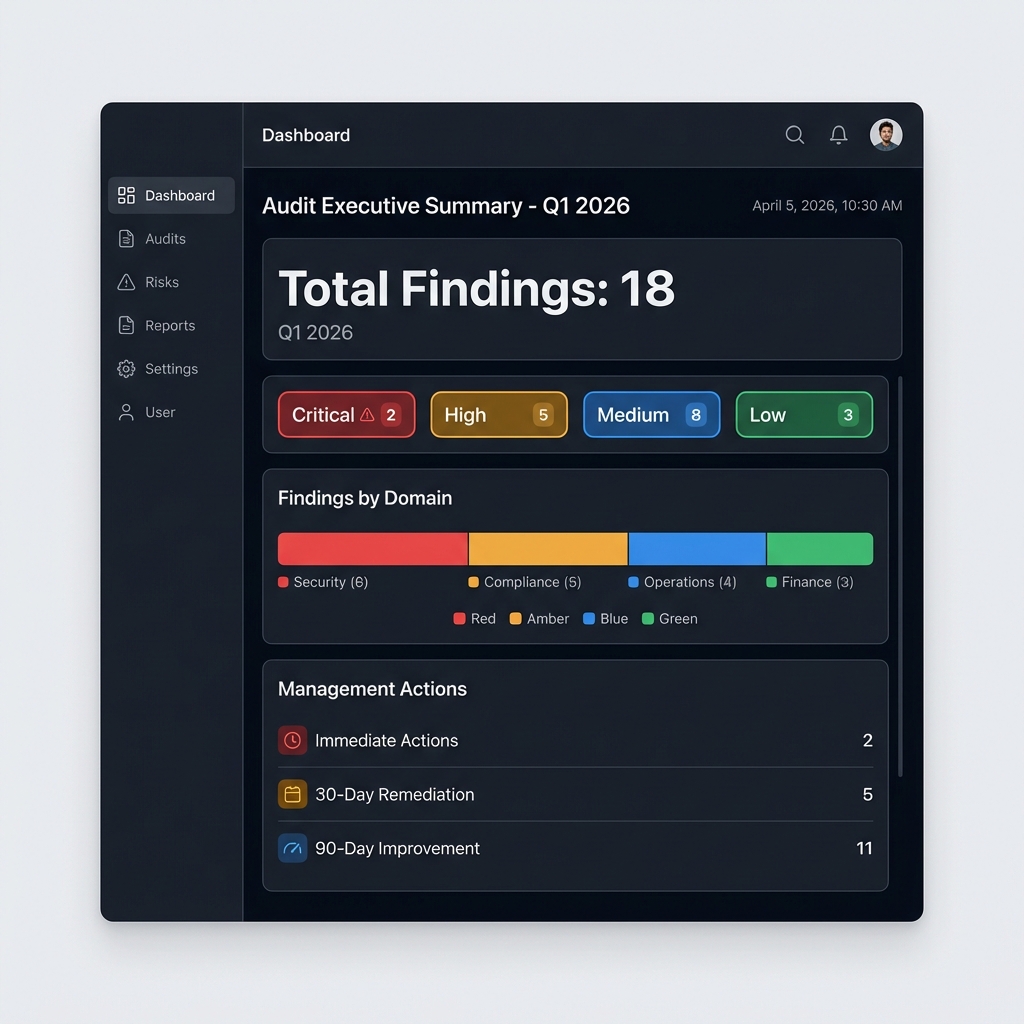

Audit Findings Report

Detailed findings with evidence, severity classification, and root cause analysis. Written for boards, audit committees, and regulatory correspondence.

Privacy Act 2020 Gap Analysis

A principle-by-principle assessment of how your AI systems perform against each of the 13 Information Privacy Principles, with specific remediation actions.

Treaty Compliance Assessment

Evaluation of Maori data handling practices, cultural impact considerations, and alignment with kaitiakitanga principles and data sovereignty expectations.

Public Service Framework Alignment

Mapping of your governance posture against the NZ Public Service AI Framework (February 2025) and the National AI Strategy, with gap identification and recommendations.

Prioritised Action Plan

A ranked list of remediation actions sorted by risk reduction impact, with ownership suggestions, effort estimates, and recommended sequencing.

Leadership Briefing

A concise presentation for your board or executive team covering risk exposure, key findings, and the three to five actions that will move the needle most.

Signs You Need This Audit

If any of these describe your organisation, the gaps in your AI governance are likely larger than you think.

You cannot list every AI tool your organisation uses

Teams adopt AI tools faster than governance can keep up. If your AI inventory is incomplete or non-existent, your risk exposure is unknown.

Your board has asked about AI risk and you could not answer precisely

25% of NZ leaders say governance is the "missing link" in their AI strategy. Directors need evidence-based answers, not reassurances.

You operate in a sector the FMA or RBNZ supervises

With 9 out of 10 NZ financial firms already using AI, regulators are building their understanding of industry practice. An audit positions you ahead of that curve.

Your AI systems process data about Maori or Pacific communities

Treaty obligations and data sovereignty principles create responsibilities that standard governance frameworks do not address. A culturally informed audit closes that gap.

You are scaling AI usage and want to do it responsibly

76% of NZ leaders are prioritising AI agents. Scaling without a governance baseline is how organisations end up in reactive crisis mode instead of proactive management.

You deliver public services or government contracts

The Public Service AI Framework sets clear expectations for government AI use. If you serve the public sector, alignment with this framework is becoming a baseline requirement.

Common Questions About AI Audits in NZ

What triggers the need for an AI audit in New Zealand?

There is no mandatory AI audit requirement in NZ law. The triggers are practical, not regulatory: your organisation is deploying AI at scale, a board or audit committee wants assurance, you are entering a regulated sector, you handle Maori or community data, or you want a defensible governance position before regulation catches up. Given that generative AI could contribute more than 15% to NZ GDP by 2038, the organisations that build governance now will be the ones trusted to lead.

How do Treaty of Waitangi obligations factor into an AI audit?

Te Tiriti creates obligations around partnership, participation, and protection that directly affect how AI systems should handle Maori data and serve Maori communities. Our audit assesses whether your AI practices align with data sovereignty expectations, whether algorithmic outputs risk disproportionate impact, and whether governance structures include appropriate cultural oversight. This is not a box-ticking exercise -- it reflects principles of kaitiakitanga that are fundamental to responsible AI in Aotearoa.

What does the FMA expect from organisations using AI?

The FMA's 2024 research covered AI use across asset management, banking, financial advice, and insurance. While the FMA has not issued prescriptive AI rules, it expects firms to manage AI risks under their existing licence obligations -- including fair conduct, customer outcomes, and operational resilience. The RBNZ holds the same expectation. Our audit maps your practices against what these regulators look for during supervisory reviews.

We already have a privacy programme. Is that enough?

A privacy programme covers personal information handling, but AI introduces risks that privacy frameworks alone do not address: algorithmic bias, explainability of automated decisions, model drift, shadow AI adoption, and cultural impact. An AI audit assesses the full governance picture, with Privacy Act 2020 compliance as one critical component of a broader review.

Can we use the audit results if a regulator contacts us?

Yes, and that is one of the primary reasons organisations commission an audit. Our reports are structured to demonstrate proactive governance effort. If the FMA, RBNZ, or Office of the Privacy Commissioner requests evidence of your AI governance posture, the audit findings, gap analysis, and remediation plan serve as documented proof that your organisation took responsible steps.

After the Audit

An audit tells you where the gaps are. These services close them.

AI Governance Consulting

Turn audit findings into a working governance framework with clear accountabilities, processes, and controls.

Learn more →

AI Risk Assessment

Build the risk taxonomy, assessment methodology, and monitoring approach your audit identified as missing.

Learn more →

Privacy Act 2020 Compliance

Address the specific Privacy Principle gaps identified in your audit with targeted remediation and documentation.

Learn more →

Book Your AI Audit and Assessment

Get an independent, NZ-specific AI audit that covers Privacy Act compliance, Treaty obligations, FMA and RBNZ expectations, and international best practice. Know exactly where you stand and what to fix first.