AI Governance for Australian Technology Companies

Australian tech companies are adopting AI rapidly. Privacy Act transparency obligations for automated decision-making take effect on 10 December 2026, VCs now include AI governance in due diligence, and the Government is reviewing how product liability law applies to AI. A governance framework helps you navigate these requirements.

Tech Companies Face a Double Challenge

You're building AI products AND using AI internally. Each creates different governance obligations.

"Are we liable for our AI product's decisions?"

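The Australian Consumer Law imposes strict liability on manufacturers of goods with safety defects, but how that regime applies to AI systems and software is still unsettled, and the Government is consulting on product liability reform. When your AI makes a mistake that harms a user, who's responsible?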

"What do we tell customers about their data?"

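Amendments to the Privacy Act passed in 2024 require privacy policies to disclose automated decision-making from 10 December 2026. If your product uses personal information in computer programs, including AI, that make or substantially contribute to decisions that could significantly affect individuals, these transparency obligations apply. Training models on customer data raises further questions about secondary use of personal information.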

"VCs are asking about our AI governance"

Due diligence now includes AI governance questions. VCs want to see you're managing AI risk, have privacy controls, and won't face regulatory issues post-investment.

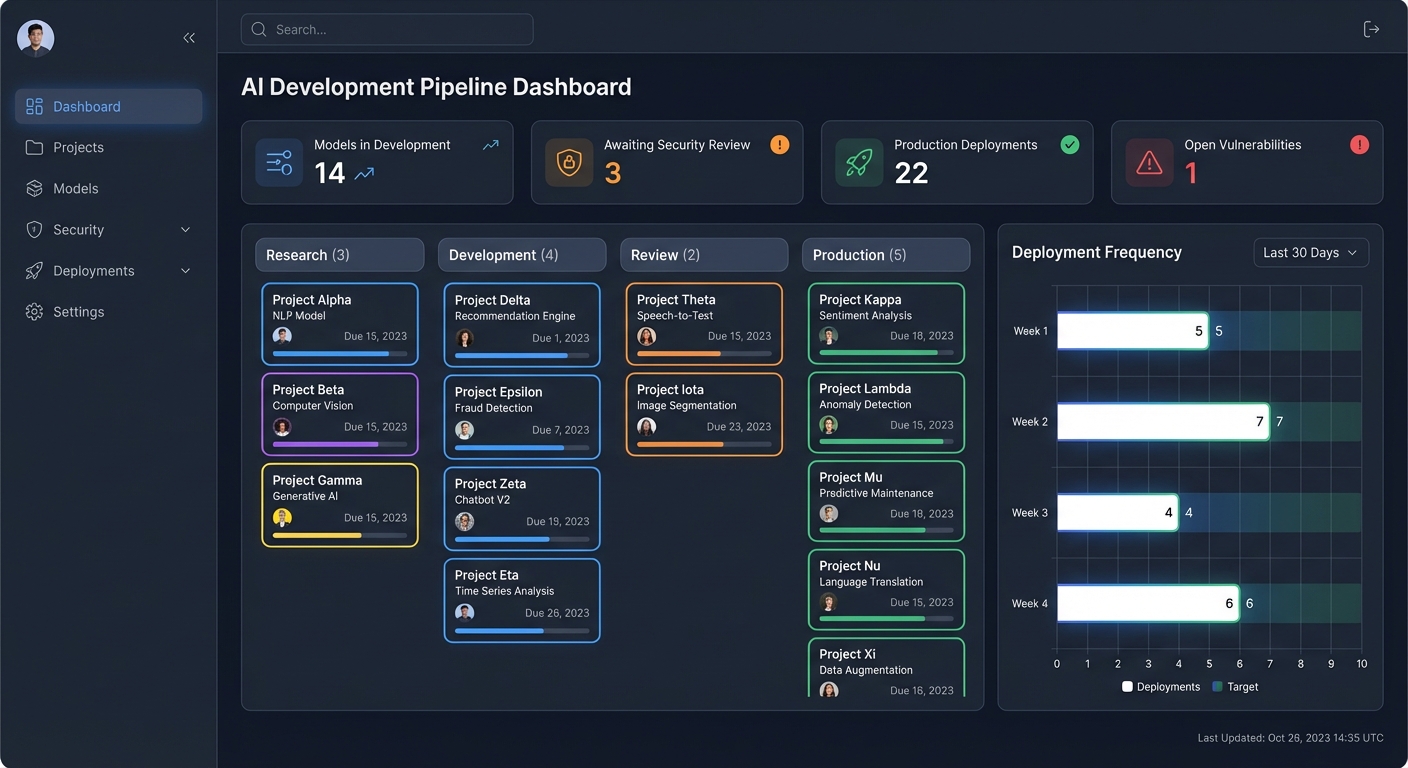

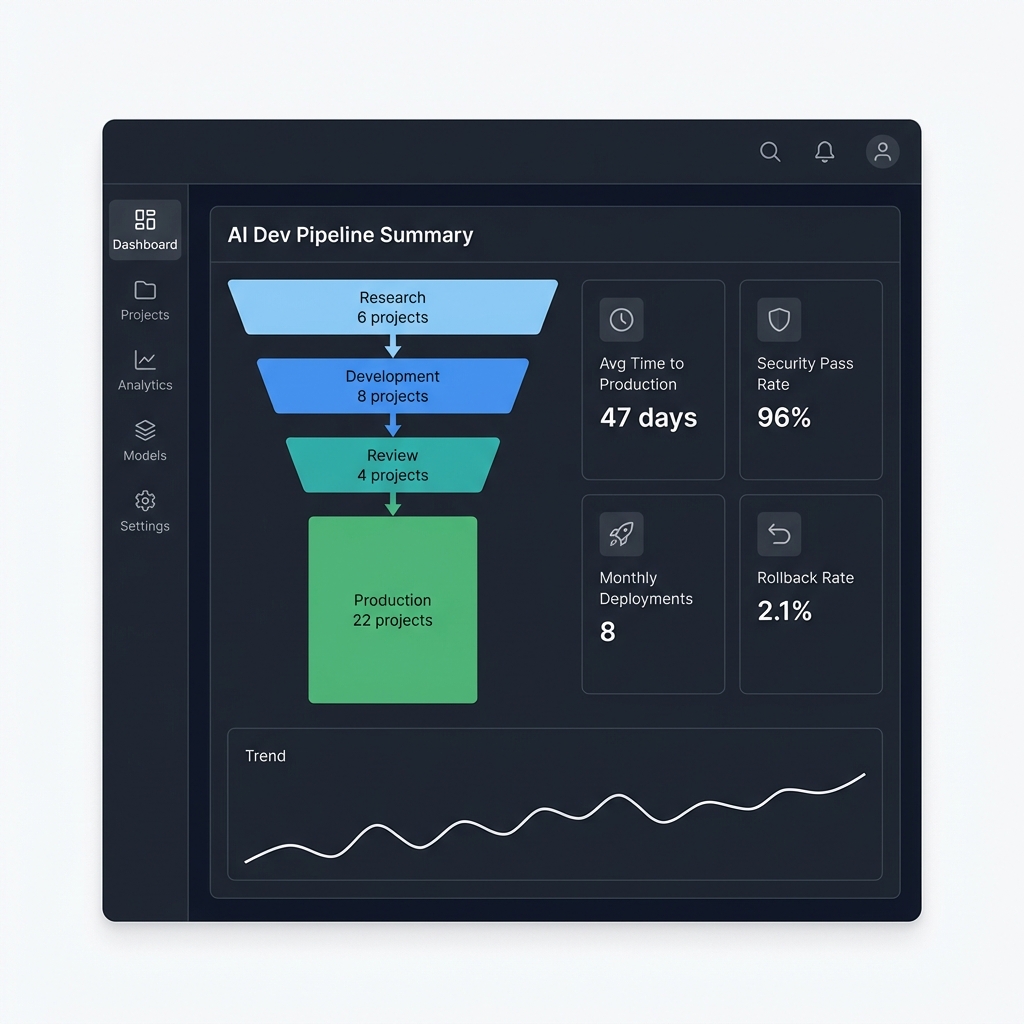

AI as Product vs AI as Tool

Different use cases create different governance requirements. Both need attention.

Building AI Products

Your AI is the product customers buy

If you're developing AI-powered SaaS, APIs, or software products, you face potential product liability, customer privacy obligations, and other regulatory compliance requirements.

Key Risks:

- Product liability for AI decisions that cause harm

- Privacy obligations for processing customer data

- Algorithmic bias creating discriminatory outcomes

- Transparency requirements for automated decisions

- EU AI Act compliance if selling into Europe

Using AI Internally

AI tools in your operations

Using ChatGPT, GitHub Copilot, or other AI tools internally? You're sending company data to third-party services, raising IP ownership questions, and introducing operational risks.

Key Risks:

- Data leakage through public AI models

- IP ownership uncertainty for AI-generated code

- Shadow AI use without security review

- Code quality issues from over-reliance on AI

- Privacy and workplace surveillance obligations if AI tools monitor employees

Regulatory Requirements for Tech Companies

Australia doesn't have AI-specific regulation yet, but existing laws already apply to AI systems.

2024 Privacy Act Amendments

Automated decision-making transparency

- Must disclose automated decision-making in privacy policies

- Explain the kinds of decisions made or substantially assisted by computer programs (including AI) and the kinds of personal information used

- Existing data quality obligations (APP 10) extend to personal information used as AI training data

- Tiered civil penalties, with the highest tier reserved for serious interferences with privacy

Australian Consumer Law

Product liability for AI systems

- Strict liability for manufacturers of defective goods may extend to AI products

- Government consulting on AI product liability reform

- Liability questions for autonomous AI systems

- Added complexity when multiple parties are involved in the AI supply chain

OAIC AI Guidance (October 2024)

Privacy regulator expectations

- Guidance on using commercially available AI products

- Expectations for developing and training generative AI models

- Accuracy, transparency, and data quality emphasis

- Secondary use restrictions for training data

EU AI Act (If Selling Into Europe)

Extraterritorial application

- Can apply to Australian companies that place AI systems on the EU market or whose AI output is used in the EU

- Most high-risk AI system obligations apply from 2 August 2026

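- Penalties for breaching high-risk AI requirements of up to €15 million or 3% of worldwide annual turnover (whichever is higher, or whichever is lower for SMEs and start-ups)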

- Risk classification determines compliance obligations

VCs Are Asking About AI Governance

Australian tech companies continue to attract venture capital investment. ESG considerations are increasingly important, and AI governance is part of that conversation.

Compliance Due Diligence

VCs review your Corporations Act compliance, Australian Consumer Law adherence, privacy controls, and employment law compliance. AI governance gaps create deal risk.

Risk Management

AI risk is a key concern for business leaders and investors alike. Investors want to see that you've identified your AI risks and have credible plans to mitigate them.

Responsible Innovation

Investors increasingly favour companies that can demonstrate responsible AI development alongside broader sustainability practices. A governance framework signals maturity.

AI Governance Services for Tech Companies

Governance frameworks designed for startups and scale-ups building AI products or using AI internally.

Product AI Governance

- Product liability risk assessment

- Bias testing and fairness validation

- Customer transparency requirements

- Model monitoring and performance tracking

Privacy Compliance

- Compliance roadmap for the 2024 Privacy Act amendments

- Automated decision-making disclosures

- Training data privacy frameworks

- Cross-border data transfer compliance

Internal AI Policies

- Acceptable use policies for AI tools

- Code of conduct for AI-generated code

- Data leakage prevention guidelines

- IP ownership frameworks

VC Due Diligence Preparation

- Investment-ready governance documentation

- Risk assessment and mitigation plans

- Compliance gap remediation

- Board-ready AI governance frameworks

EU AI Act Readiness

- Risk classification assessment

- High-risk system compliance planning

- Documentation and record-keeping

- Market entry strategy for EU expansion

AI Audits & Assessments

- Current state governance review

- Regulatory compliance gap analysis

- Shadow AI discovery and inventory

- Risk prioritisation and roadmapping

Don't Let Governance Slow Down Innovation

Get ahead of the Privacy Act's December 2026 requirements, prepare for VC due diligence, and build governance frameworks that scale with your product. Start with a free consultation.