AI Risk Framework Development

Build comprehensive AI risk frameworks that integrate with your existing enterprise risk management, satisfy APRA requirements, and provide board-ready reporting.

We develop AI-specific risk taxonomies, assessment methodologies, and controls libraries aligned to NIST AI RMF, ISO 42001, and Australian regulatory expectations.

The Challenge

AI introduces novel risks that don't fit neatly into traditional risk categories. Most organisations struggle to classify, assess, and manage AI-specific risks within existing frameworks.

Missing Risk Taxonomy

Generic enterprise risk categories don't capture AI-specific risks like model drift, training data bias, hallucinations, or third-party AI vendor dependencies.

Assessment Complexity

Traditional risk assessment methods don't account for dynamic AI behaviour. A model performing well today may degrade silently over time without proper monitoring.

Control Gaps

Existing IT controls weren't designed for AI systems. Organisations lack controls for bias testing, explainability validation, and model performance monitoring.

"Nearly half of the licensees we reviewed do not have a policy on fairness and bias for their AI use... The frameworks of AI governance tended to be less mature for generative AI than for predictive AI."

- ASIC REP 798: Beware the Gap (October 2024), reviewing AI governance at 23 AFS and credit licensees

Our Methodology

We build risk frameworks that integrate with your existing enterprise risk management. There are no parallel governance structures: AI risk becomes part of how you already manage risk.

Aligned to Leading Standards

Risk Taxonomy Development

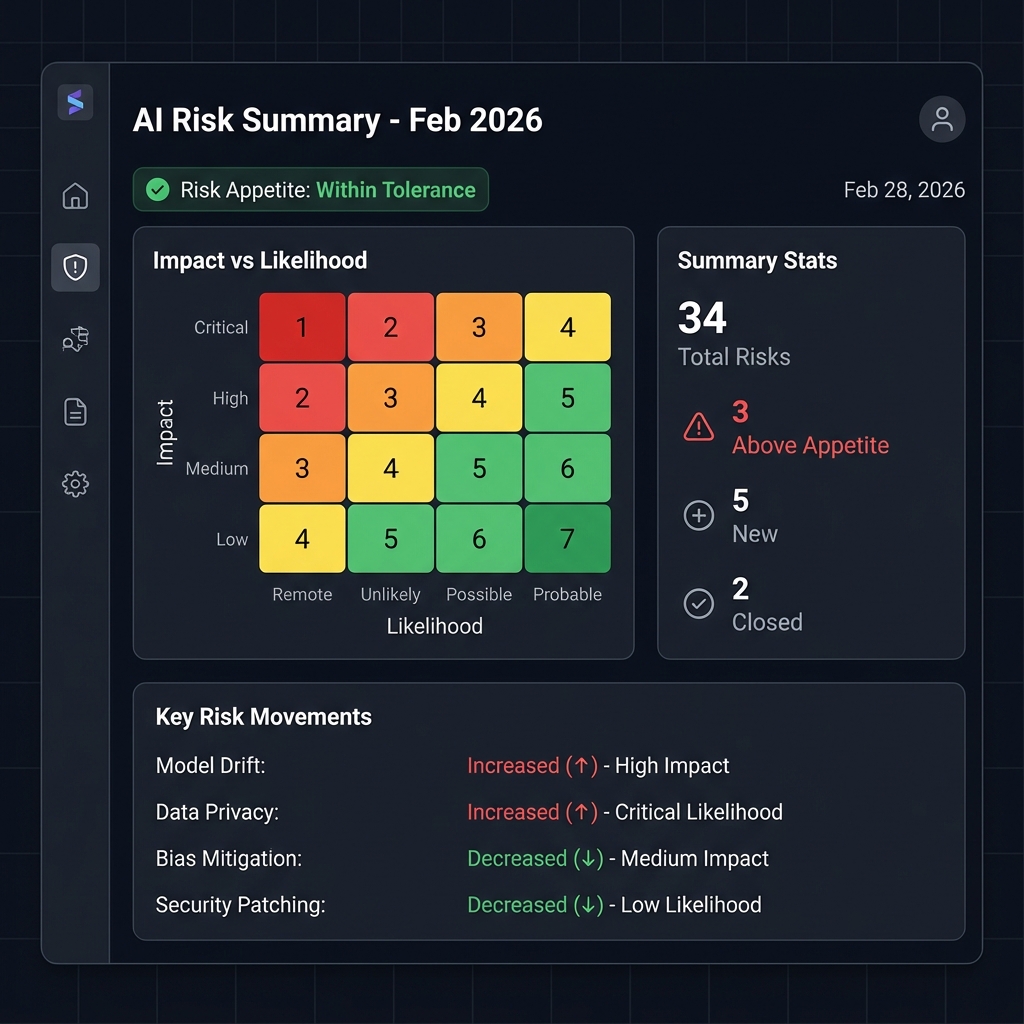

We create a comprehensive AI risk classification system covering technical risks (model performance, drift, bias), operational risks (availability, integrity), legal risks (liability, privacy), and strategic risks, all mapped to your existing enterprise risk categories.

Assessment Methodology Design

We develop structured assessment approaches for different AI use cases, such as credit risk models, fraud detection, customer service, and generative AI. Each methodology includes materiality thresholds aligned to your risk appetite.

Controls Library Creation

We build a library of 50+ AI-specific controls: preventive (data validation, access management), detective (performance monitoring, drift detection), corrective (retraining triggers, incident response), and governance controls (approval workflows, audit trails).

Three Lines Integration

We define clear responsibilities across the three lines of defence: first line (development standards, testing), second line (independent validation, compliance), and third line (internal audit). No gaps, no duplication.

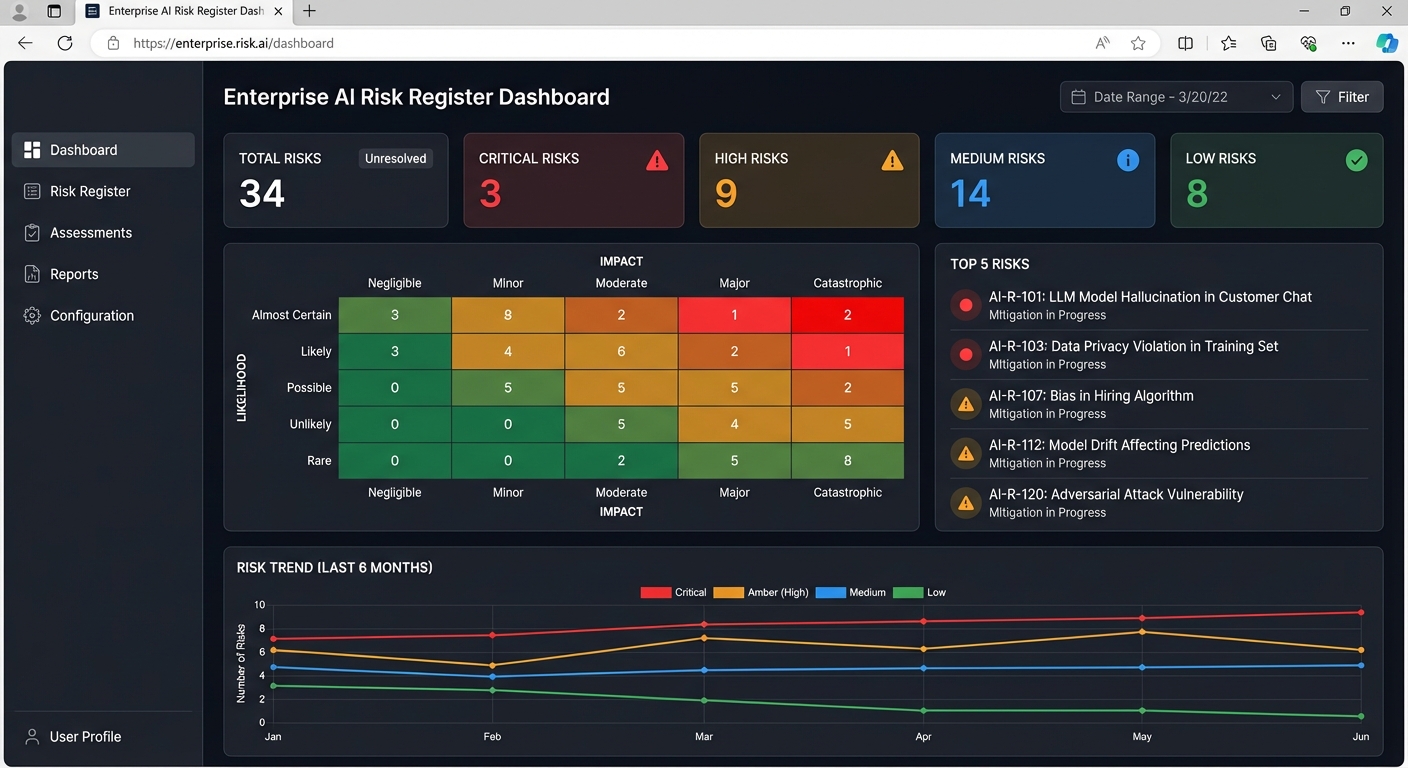

Board Reporting Framework

We create Key Risk Indicators (KRIs) and board-level dashboards that communicate AI risk in terms executives understand. We also document clear escalation pathways and decision rights.
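
To illustrate how a drift-related KRI might be defined, the sketch below computes a Population Stability Index (PSI), one common drift measure, and maps it to a red/amber/green status for reporting. The function names and the 0.10 and 0.25 thresholds are assumptions for illustration (a widely quoted rule of thumb), not prescribed values; in practice thresholds would be set against your risk appetite.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], eps: float = 1e-6
) -> float:
    """PSI between two binned distributions, each given as per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("expected and actual must have the same number of bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        # Floor tiny proportions so the logarithm is always defined.
        e = max(e, eps)
        a = max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


def kri_status(value: float, amber: float = 0.10, red: float = 0.25) -> str:
    """Map a KRI value to a status; thresholds here are illustrative only."""
    if value >= red:
        return "RED"
    if value >= amber:
        return "AMBER"
    return "GREEN"


# Hypothetical score-band proportions at model approval vs. the latest month.
baseline = [0.25, 0.25, 0.25, 0.25]
current = [0.10, 0.20, 0.30, 0.40]

psi = population_stability_index(baseline, current)
print(f"PSI = {psi:.3f}, status = {kri_status(psi)}")
```

A status change from green to amber would typically trigger review by the model owner, while red would follow the documented escalation pathway.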

What You Receive

Practical deliverables designed for operationalisation, not just documentation.

AI Risk Taxonomy

Comprehensive classification of AI risks tailored to your organisation and Australian regulatory context. Document plus Excel taxonomy for GRC integration.

Assessment Methodology

Step-by-step methodology for assessing AI risks across the lifecycle. Includes templates for quantitative and qualitative assessment.

AI Risk Register

Pre-populated risk register with common AI risks, control mappings, and assessment fields. Excel or GRC-compatible format.

Controls Library

50+ AI-specific controls mapped to risk categories and NIST AI RMF functions (Govern, Map, Measure, Manage). Includes policy templates.

Three Lines Framework

Roles, responsibilities, and operating model for AI risk governance across first, second, and third lines of defence.

Board Reporting Pack

Templates for AI risk reporting to board and risk committee. Includes KRI definitions, dashboard designs, and escalation protocols.
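
As a rough picture of how a risk register entry and its control mappings could be structured for GRC import, the sketch below links one risk to controls tagged by type and NIST AI RMF function. All class names, field names, identifiers, the 1 to 5 scoring scale, and the specific control-to-function mapping are hypothetical and shown only for illustration; the actual register follows your organisation's taxonomy and rating scales.

```python
from dataclasses import dataclass, field
from enum import Enum


class RmfFunction(Enum):
    GOVERN = "Govern"
    MAP = "Map"
    MEASURE = "Measure"
    MANAGE = "Manage"


class ControlType(Enum):
    PREVENTIVE = "Preventive"
    DETECTIVE = "Detective"
    CORRECTIVE = "Corrective"
    GOVERNANCE = "Governance"


@dataclass(frozen=True)
class Control:
    control_id: str
    name: str
    control_type: ControlType
    rmf_function: RmfFunction


@dataclass
class RiskEntry:
    risk_id: str
    description: str
    category: str      # maps to an existing enterprise risk category
    likelihood: int    # assumed 1-5 scale
    impact: int        # assumed 1-5 scale
    controls: list[Control] = field(default_factory=list)

    def inherent_rating(self) -> int:
        # Simple likelihood x impact score; an assumption, not a mandated formula.
        return self.likelihood * self.impact

    def uncovered_functions(self) -> set[RmfFunction]:
        # RMF functions with no mapped control for this risk.
        return set(RmfFunction) - {c.rmf_function for c in self.controls}


drift_control = Control(
    "CTL-DET-001", "Drift detection", ControlType.DETECTIVE, RmfFunction.MEASURE
)
entry = RiskEntry(
    risk_id="AIR-001",
    description="Model drift degrades credit decision quality",
    category="Technical",
    likelihood=3,
    impact=4,
    controls=[drift_control],
)
print(entry.inherent_rating())
print(sorted(f.value for f in entry.uncovered_functions()))
```

Listing the RMF functions without a mapped control is one simple way to surface coverage gaps when reviewing the register.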

Who This Is For

This service is designed for Chief Risk Officers and risk leaders who need to integrate AI risk into existing enterprise risk management without creating parallel governance structures.

APRA-Regulated Entities

Banks, insurers, and superannuation trustees preparing for CPS 230 compliance.

Financial Services Licensees

AFS and credit licensees responding to ASIC REP 798 governance expectations.

Enterprise Risk Teams

Organisations with mature ERM programs that need to extend coverage to AI systems.

Board and Audit Committees

Directors seeking assurance that AI risks are properly identified, assessed, and governed.

Frequently Asked Questions

How does this integrate with our existing ERM framework?

We design AI risk frameworks to complement your existing enterprise risk management, not replace it. AI risks are categorised within your existing risk taxonomy where possible, with new categories only where AI-specific risks genuinely differ.

Does this satisfy APRA CPS 230 requirements?

Yes. Our frameworks are specifically designed for APRA-regulated entities. We map all AI risks to CPS 230 operational risk management requirements and include material service provider assessment for third-party AI vendors.

What about generative AI risks?

Our risk taxonomy includes generative AI-specific risks: hallucinations, prompt injection, data leakage, intellectual property concerns, and inaccurate model output. We provide specific assessment methodologies for generative AI use cases.

How long does the engagement take?

Typical engagements run 10-16 weeks depending on scope: Discovery (2-3 weeks), Design (4-6 weeks), Validation (2-3 weeks), and Delivery (2-4 weeks). We can accelerate for CPS 230 deadline requirements.

Related Services

AI Governance Consulting

Comprehensive governance program design including operating models and committee structures.

AI Audit & Assessment

Independent assessment of your current AI governance maturity and regulatory readiness.

ISO 42001 Certification

Implementation consulting for the international AI management system standard.

Build Your AI Risk Framework

Schedule a consultation to discuss your AI risk management requirements and how we can help you build a framework that satisfies regulators and protects your organisation.