AI Governance for Australian Healthcare Providers

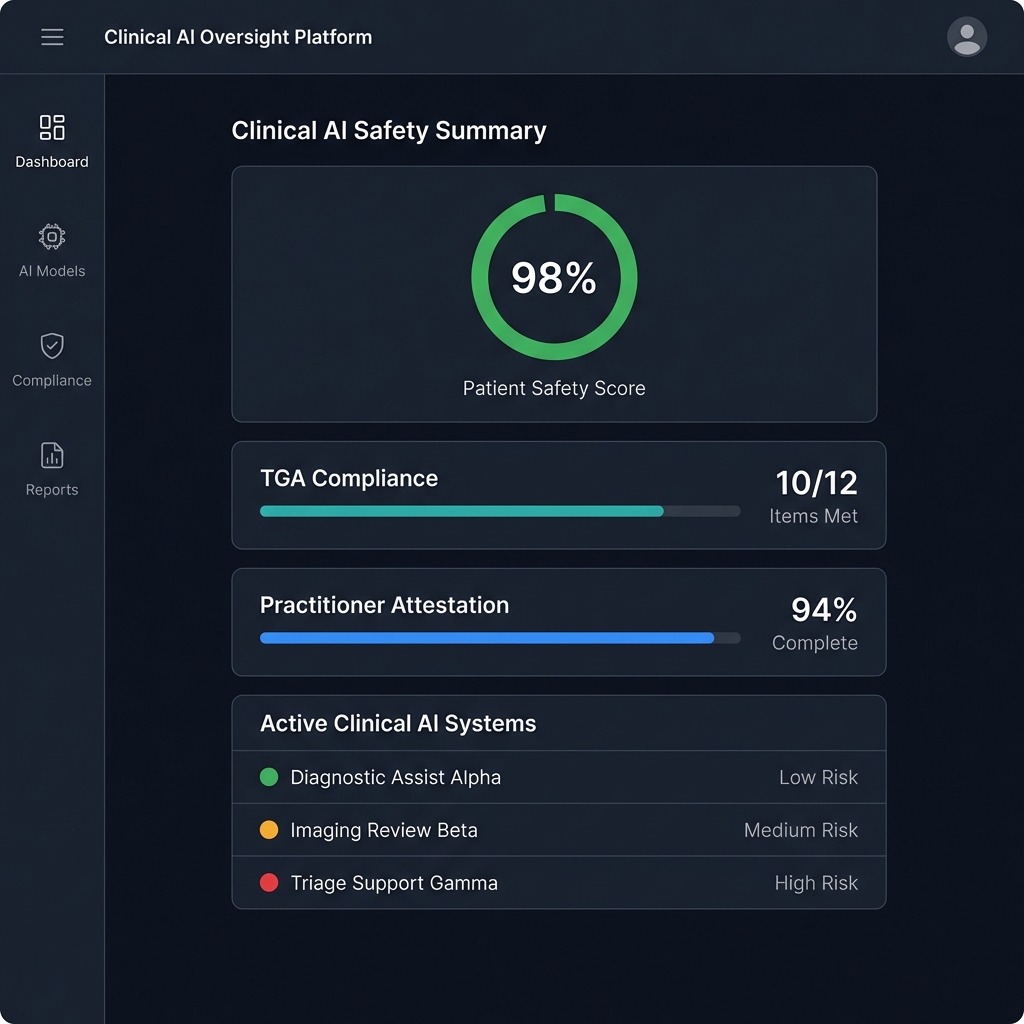

Australian hospitals and healthcare providers are adopting AI-powered systems while the TGA tightens medical device regulation and AHPRA sets professional obligations for practitioners who use AI-assisted decisions. A governance framework helps you navigate these requirements.

Sound Familiar?

Healthcare AI is different. Patient safety, practitioner liability, and multiple overlapping regulators make governance complex.

"Is our AI software a regulated medical device?"

The TGA's grace period ended in November 2024. Clinical decision support, AI scribes with diagnostic features, and predictive tools may require ARTG registration. Getting the classification wrong creates serious legal exposure.

"What are our practitioners' AI obligations?"

AHPRA is clear: practitioners remain "ultimately responsible" for AI used in their practice. That includes checking AI scribe accuracy, understanding bias risks, and ensuring proper consent. One in four GPs already use AI scribes.

Develop practitioner guidelines →

"How do we protect patient data in AI systems?"

Health information is "sensitive information" under the Privacy Act. AI scribes process consultation recordings. AI models may be trained on patient data. The National Health Privacy Rules 2025 add new requirements.

Healthcare AI Has Multiple Regulators

No single framework covers healthcare AI. You need to navigate TGA, AHPRA, OAIC, and ACSQHC requirements simultaneously.

Therapeutic Goods Administration

Medical device regulation

- SaMD classification and ARTG registration

- AI scribes with diagnostic features under review

- Adaptive AI and LLMs creating new compliance questions

Australian Health Practitioner Regulation Agency

Professional obligations

- Practitioners "ultimately responsible" for AI in their practice

- Must understand AI bias risks for vulnerable populations

- Professional indemnity insurance must cover AI use

Office of the Australian Information Commissioner

Privacy and data protection

- Health information is "sensitive information" under APPs

- Cross-border data flows (APP 8) for offshore AI providers

- My Health Record mandatory breach notification

Australian Commission on Safety and Quality in Health Care

Clinical governance

- AI implementation guides for clinicians (August 2025)

- NSQHS Standards integration requirements

- "Before-while-after" clinical governance framework

Healthcare AI Use Cases Need Governance

Each AI application has different risk profiles and regulatory requirements. Governance must be use-case specific.
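One way to keep governance use-case specific is to hold a simple register of AI use cases and their headline status. The sketch below is illustrative only: it assumes a plain dictionary keyed by use case, with labels copied from this page's own summaries. The names `REGISTER` and `group_by_label` are hypothetical, and the labels are not legal determinations.

```python
from collections import defaultdict

# Hypothetical register: use case -> summary label as given on this page.
# These labels are marketing-level summaries, not a TGA classification.
REGISTER = {
    "AI scribes": "High adoption, variable governance",
    "Diagnostic imaging": "TGA registration likely required",
    "Clinical decision support": "TGA registration likely required",
    "Operations and resource planning": "Lower regulatory burden",
}


def group_by_label(register):
    """Group use-case names by their summary label, preserving entry order."""
    groups = defaultdict(list)
    for use_case, label in register.items():
        groups[label].append(use_case)
    return dict(groups)


if __name__ == "__main__":
    for label, use_cases in group_by_label(REGISTER).items():
        print(f"{label}: {', '.join(use_cases)}")
```

Grouping by label makes it easy to see which use cases share a review pathway. A real register would also record the owner, vendor, data flows, and review dates for each system.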

AI Scribes

Adoption of AI scribes is increasing rapidly. Recording consent, accuracy verification, and privacy obligations require clear policies.

High adoption, variable governance

Diagnostic Imaging

Machine learning analysis of medical images is widespread. AI-assisted imaging systems demonstrate high accuracy and are likely TGA-regulated medical devices.

TGA registration likely required

Clinical Decision Support

Covers patient deterioration prediction, treatment recommendations, and triage support. The TGA regulates software that suggests a diagnosis or treatment.

TGA registration likely required

Operations & Resource Planning

Bed management, staffing optimisation, supply chain. Lower regulatory risk but still requires data governance and privacy compliance.

Lower regulatory burden

Healthcare AI Risks Are Different

Patient safety, algorithmic bias, and practitioner liability create unique governance challenges that generic AI frameworks don't address.

Algorithmic Bias

AI trained on non-Australian or non-diverse data may not perform well locally. AHPRA specifically requires addressing biases affecting Aboriginal and Torres Strait Islander communities.

AI Scribe Privacy Risks

In some states and territories, recording a consultation without consent is a criminal offence. Third-party AI processing of health data requires careful privacy engineering. Patients may not anticipate secondary use of their data for AI training.

Liability Uncertainty

The Royal Australasian College of Surgeons has called for updates to civil liability frameworks. When AI-generated documentation leads to a negligence claim, it remains unclear how liability is allocated.

Healthcare AI Governance Services

Governance frameworks designed for healthcare's unique regulatory environment and clinical accountability requirements.

TGA Regulatory Support

- SaMD classification assessment

- ARTG registration support

- Clinical evidence requirements

- Post-market surveillance frameworks

Clinical Governance Frameworks

- ACSQHC-aligned governance models

- NSQHS Standards integration

- Human oversight protocols

- Clinical validation processes

Privacy & Data Governance

- Privacy Act compliance assessment

- My Health Record obligations

- Cross-border data transfer compliance

- Secondary data use frameworks

Practitioner Guidance

- AHPRA obligations translation

- AI scribe implementation policies

- Patient consent frameworks

- Bias awareness training

Health Tech Support

- TGA pathway assessment

- MVP compliance frameworks

- Market entry strategy

- Investment-ready governance

AI Audit & Assessment

- Current state AI governance review

- Regulatory compliance gap analysis

- Bias and fairness assessment

- Risk assessment and prioritisation

Healthcare AI Is Growing Faster Than Governance

Don't wait until the TGA, AHPRA, or the OAIC starts asking questions. Get ahead of healthcare AI regulatory requirements with governance frameworks designed for clinical accountability.