DTA Policy 2.0 Is In Force. Is Your Agency Compliant?

The Policy for the Responsible Use of AI in Government requires designated accountable officials, public transparency statements, and risk-based governance. Audits have identified gaps in data ethics assessments across government AI systems, and a structured governance framework helps agencies close them.

Public Sector AI Governance Is Different

Multi-layered requirements, heightened scrutiny, and accountability to citizens create unique governance challenges.

"We need to publish a transparency statement"

The DTA requires non-corporate Commonwealth entities to publish AI transparency statements on their public-facing websites. Statements must be written in plain language, clearly explain how the agency uses AI, and be updated at least annually. The February 2025 deadline has passed. Have you published yours?

"Who is accountable for our AI?"

Every agency must designate accountable officials and provide their contact details to the DTA. Post-Robodebt, accountability for automated decisions is under intense scrutiny, so clear roles and responsibilities aren't optional.

Define accountability structures →

"How do we assess AI risk?"

The DTA's AI Impact Assessment Tool requires agencies to identify, assess, and manage risks against Australia's AI Ethics Principles. High-risk use cases must be prepared for AI Review Committee consideration. Do your teams know how to complete these assessments?

Build risk assessment capability →

DTA Policy Requirements at a Glance

The Policy for the Responsible Use of AI in Government (Version 2.0) mandates specific actions for all non-corporate Commonwealth entities.

Strategic AI Approach

Agencies must develop a strategic approach to adopting AI aligned with organisational goals and the "enable, engage and evolve" framework.

Operationalise Responsible AI

Establish an approach to operationalise responsible AI use through governance structures, policies, and procedures.

Designated Accountability

Ensure designated accountability for each AI use case, with accountable officials identified and their contact details provided to the DTA.

Risk-Based Actions

Take risk-based actions at the use-case level, using the DTA's AI Impact Assessment Tool to assess risks against Australia's AI Ethics Principles.

Transparency Statements

Publish AI transparency statements on public-facing websites using clear, plain language consistent with the Australian Government Style Manual.

Mandatory AI Training

AI fundamentals training is now mandatory for all APS staff to ensure baseline understanding of responsible AI use.

State Government Requirements Vary

NSW, Victoria, and Queensland each have distinct AI governance frameworks. Agencies operating across jurisdictions must navigate every framework that applies to them.

NSW AI Assessment Framework

Mandatory for all NSW Government agencies under Circular DCS-2024-04. High or Critical risk use cases must be referred to the NSW AI Review Committee.

- Five overarching principles: Community Benefit, Fairness, Privacy and Security, Transparency, and Accountability
- AI Agent Usage and Deployment Guidance (2025)
- Digital Assurance Framework integration

Victorian Administrative Guideline

Safe and Responsible Use of Generative AI in the Victorian Public Sector (June 2025). Applies to all public service bodies under the Public Administration Act 2004 (Vic).

- Victorian Charter of Human Rights compatibility required
- "Decisions affecting customers will always be made by a human"
- Data protection, cybersecurity, and transparency domains

Queensland AI Governance Policy

First jurisdiction nationally to mandate ISO/IEC 38507 compliance for public sector AI governance, with the FAIRA framework for risk assessment.

- ISO/IEC 38507 (governance implications of the use of AI by organisations) mandatory
- Foundational AI Risk Assessment (FAIRA) Framework
- QChat secure generative AI platform

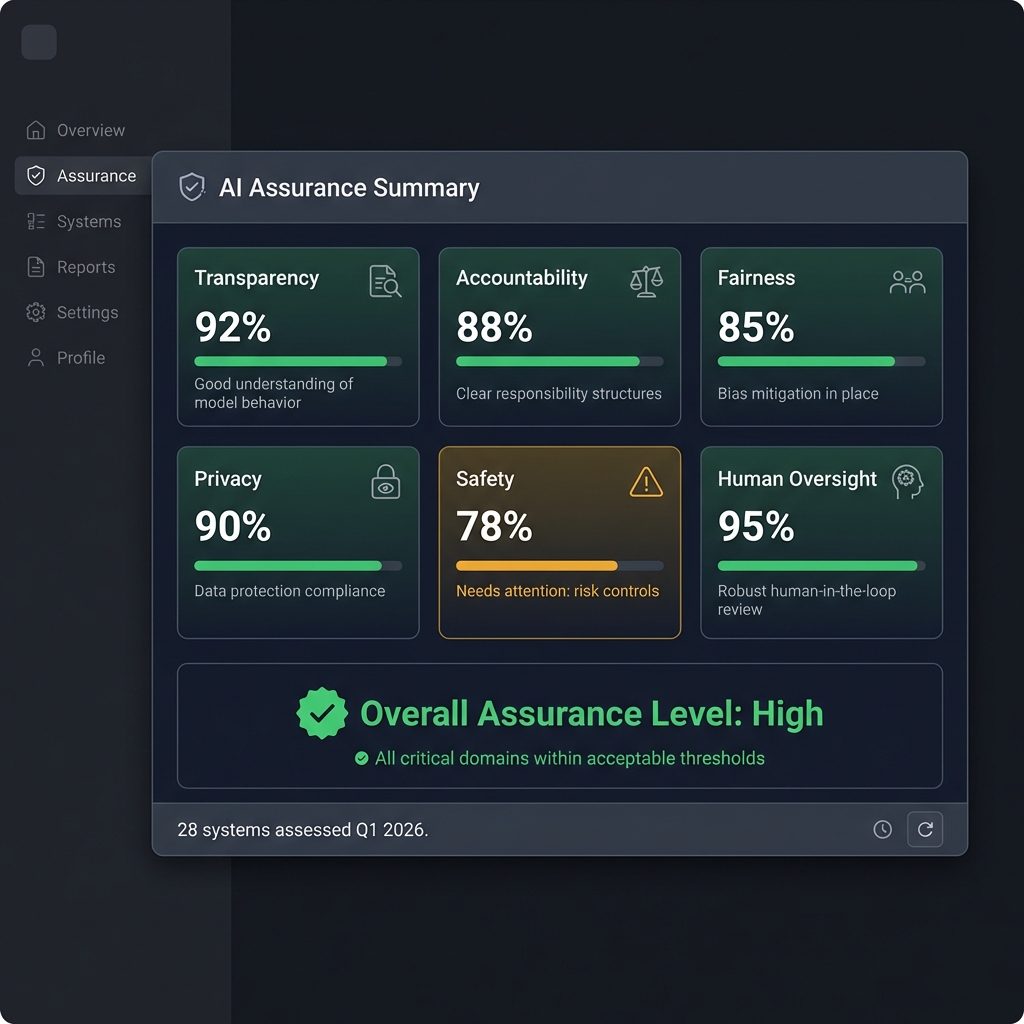

National Framework for AI Assurance

Endorsed by all Australian governments in June 2024, the National Framework establishes five cornerstones for AI assurance across the public sector.

Governance

Organisational structure, policies, processes, roles, responsibilities and risk management frameworks.

Data Governance

Standards and practices for data quality, integrity, and management throughout the AI lifecycle.

Standards

Alignment with national and international AI standards, including ISO frameworks.

Procurement

AI ethics and assurance requirements in procurement and contracts with clearly established vendor accountabilities.

Risk-Based Approach

Proportionate governance measures based on the risk level of AI applications.

Automated Decision-Making Is Under Scrutiny

Post-Robodebt, government use of automated decision-making (ADM) faces intense public and regulatory attention.

Robodebt Legacy

The Royal Commission into the Robodebt Scheme recommended reforms to establish a consistent legal framework so that ADM is used fairly and transparently. ADM systems must be fit for purpose, lawful, and fair, and must not adversely affect human and legal rights.

Services Australia now commits: "No AI used to process claims. Final decisions always made by humans."

Reform in Progress

The Attorney-General's Department consultation on ADM reforms closed in January 2025. Proposals include improved governance, a consistent legal framework, enhanced transparency, and protections for personal information.

The Office of the Australian Information Commissioner (OAIC) recommends express obligations requiring agencies to proactively publish information about their ADM use.

Government AI Governance Services

Purpose-built services for public sector accountability, transparency, and citizen trust requirements.

Transparency Statement Support

- Gap analysis against DTA requirements
- Plain language statement drafting
- Annual review and update support
- Public communication strategy

AI Risk Assessment Services

- Impact assessment completion
- Risk classification mapping
- High-risk use case documentation
- AI Review Committee preparation

Governance Framework Development

- AI strategy aligned with DTA requirements
- Governance structure design
- Accountable official role definition
- Cross-functional AI committees

Training & Capability Building

- DTA-compliant AI fundamentals training
- Executive briefings
- Technical team training
- Ethics and responsible AI workshops

Procurement Support

- AI Model Clauses implementation
- Vendor assessment frameworks
- Contract negotiation support
- Due diligence checklists

State-Specific Compliance

- NSW AI Assessment Framework (AIAF) compliance assessments
- Victorian Guideline implementation
- Queensland ISO/IEC 38507 alignment
- Multi-jurisdictional coordination

Citizens Expect Accountability. Regulators Demand It.

Don't wait for an ANAO audit finding or media scrutiny. Get your agency's AI governance ahead of requirements with frameworks designed for public sector accountability.