AI Governance Advisory for Australian Directors and Boards

Navigate your Section 180 obligations with confidence. Specialist guidance to establish board-level oversight, assess AI risk, and discharge your duties under the Corporations Act 2001.

Serving ASX-listed companies, large private enterprises, and government entities across Australia.

Directors Face Increasing AI Governance Obligations

AI adoption across Australian organisations creates governance obligations for directors that traditional IT risk frameworks do not adequately address.

Section 180 Obligations

Under Section 180 of the Corporations Act 2001, directors must exercise care and diligence in their oversight of AI systems. Ignorance is not a defence. Recent case law establishes precedent for personal director liability in technology governance failures.

Knowledge Gap

Many boards report that AI governance has not yet been formally addressed. Research indicates boards often lack sufficient AI knowledge to provide effective oversight. Many companies have no board-approved AI policy, and some directors are reconsidering board composition to close AI expertise gaps.

Regulatory Focus

ASIC's 2024-25 Corporate Plan identifies both AI use and directors' conduct as regulatory focus areas. Directors who fail to establish appropriate governance may face personal liability for AI-related failures.

Board-Level AI Governance Advisory Services

Specialist advisory services to help Australian directors and boards establish effective AI governance frameworks

Board Education and AI Literacy

Directors cannot oversee what they do not understand. We provide board-level AI literacy workshops, briefings on AI risks and regulatory developments, and director-specific education aligned with the Australian Institute of Company Directors (AICD) eight elements framework.

- Half-day or full-day board workshops

- Non-technical, governance-focused

- Case study analysis

- Director reference guides

AI Governance Framework Development

We assist boards in establishing or enhancing AI governance structures including assessment of current AI use, development of AI governance policies, design of committee structures, and creation of board reporting frameworks.

- AI inventory and risk classification

- Board-approved AI policies

- Committee charter development

- Board reporting templates
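
For boards that want to see what an AI inventory with risk classification might look like in practice, the following is a minimal sketch. The field names, the three risk tiers, and the classification rule are all illustrative assumptions, not a prescribed AICD or regulatory method; a real register would reflect your organisation's own risk appetite and legal advice.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Hypothetical three-tier scale, chosen for illustration only
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class AISystem:
    name: str
    owner: str                 # accountable executive
    vendor_supplied: bool      # third-party model or service
    affects_individuals: bool  # outputs inform decisions about customers, staff, or citizens
    automated_decision: bool   # acts without human review


def classify(system: AISystem) -> RiskTier:
    """Assign an illustrative risk tier; the rule below is an assumption."""
    # Unreviewed automated decisions about individuals get the highest tier
    if system.affects_individuals and system.automated_decision:
        return RiskTier.HIGH
    # Either exposure to individuals or vendor dependency warrants closer review
    if system.affects_individuals or system.vendor_supplied:
        return RiskTier.MEDIUM
    return RiskTier.LOW


# Example inventory entries (hypothetical systems)
inventory = [
    AISystem("Debt recovery scoring", "CFO", False, True, True),
    AISystem("Meeting transcription", "CIO", True, False, False),
    AISystem("Warehouse demand forecast", "COO", False, False, False),
]

# Register mapping each system to its tier, suitable for a board report
register = {s.name: classify(s) for s in inventory}

for name, tier in register.items():
    print(f"{name}: {tier.value}")
```

A register of this kind gives the board a single view of where AI is in use, who is accountable for each system, and which deployments warrant closer scrutiny.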

Ongoing AI Risk Oversight Support

Governance is not a one-time exercise. We provide regular briefings on regulatory developments, review of management AI risk reports, support for board discussions on high-risk AI deployments, and annual framework reviews.

- Quarterly regulatory updates

- Management report reviews

- Ad-hoc advisory calls

- Annual framework refresh

The AICD Eight Elements Framework

Our advisory services are built on guidance from the Australian Institute of Company Directors (AICD), a recognised reference point for AI governance in Australian corporate settings.

1. Roles and Responsibilities

Clear accountability for AI decision-making from board to operations

2. People, Skills and Culture

Assessment of AI literacy gaps and capability building

3. Governance Structures

Board committee structures for AI oversight

4. Principles and Strategy

Integration of responsible AI principles into corporate strategy

5. Practices and Controls

Controls throughout the AI lifecycle

6. Stakeholder Engagement

Frameworks for identifying affected stakeholders

7. Third-Party Management

Governance protocols for AI vendors

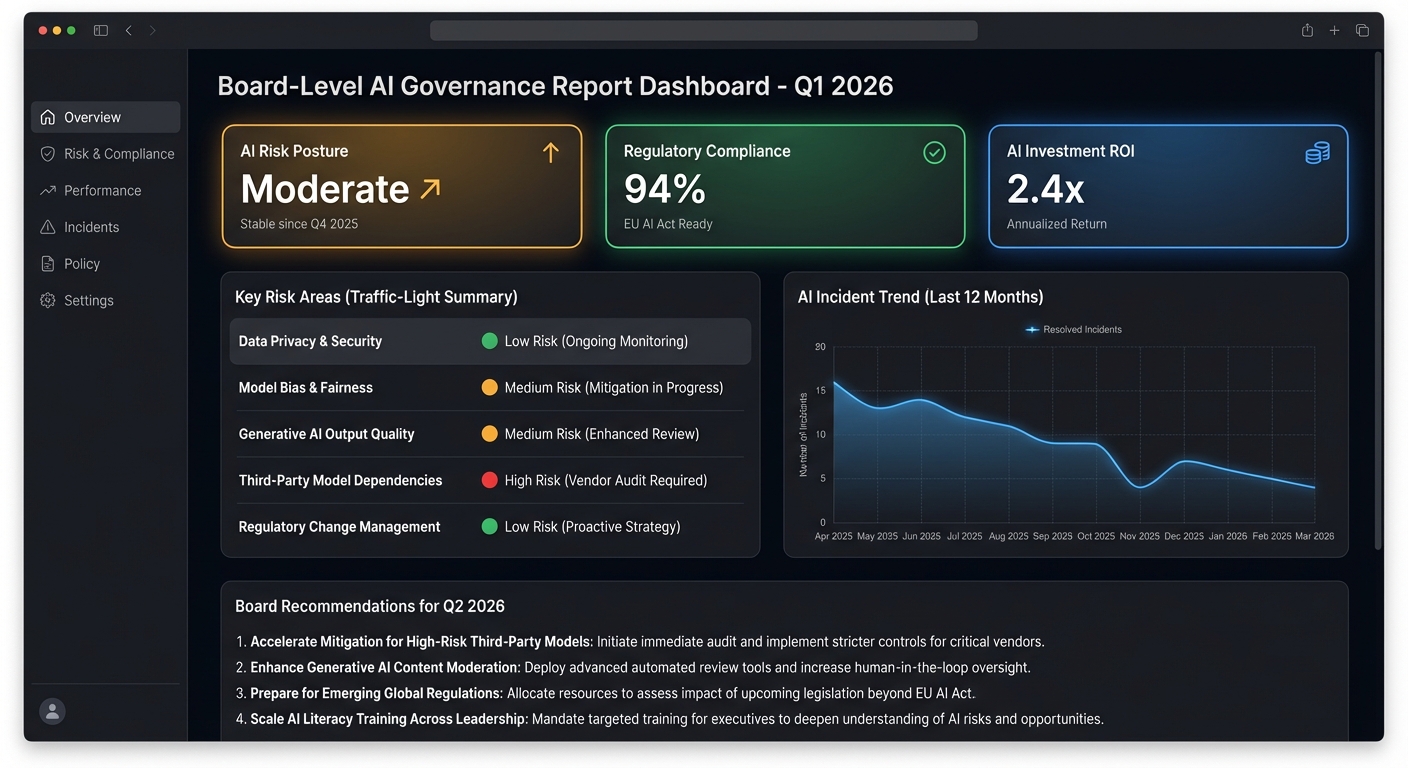

8. Monitoring and Reporting

Risk-based monitoring and board dashboards

Learning from Governance Failures

AI governance is not a theoretical concern. Failures have real consequences.

Robodebt Scheme (2016-2019)

The Australian Government's automated debt recovery system used income averaging to identify welfare overpayments. The Royal Commission found the methodology was legally invalid, lacked meaningful human oversight, and ignored warnings about its flaws.

Governance Failures:

- Absence of human oversight in critical decisions

- Legal and ethical concerns dismissed

- No transparency or independent review

- Insufficient contestability mechanisms

Deloitte Australia (2025)

Deloitte used OpenAI GPT-4o to help produce a 237-page independent review for a government client. The final report contained fabricated academic citations and non-existent court references. Deloitte did not disclose its AI use until after errors were discovered.

Governance Failures:

- AI outputs not verified before delivery

- No disclosure of AI use to client

- Quality assurance processes inadequate

- Governance frameworks lagging technology adoption

Clients We Advise

ASX-Listed Companies

Public companies with heightened disclosure obligations and sophisticated governance requirements.

Large Private Enterprises

Privately held companies deploying AI across operations, customer service, or product delivery.

Government Entities

Public sector organisations subject to additional transparency and accountability standards.

Regulated Industries

Financial services, healthcare, and other sectors with specific regulatory oversight (APRA, ASIC).

Common Questions from Directors

Our organisation is just beginning to use AI. Is it too early for board-level governance?

No. Governance should be established before significant AI deployment, not after. Early-stage governance is simpler to implement and prevents the need to retrofit controls onto existing systems. Even if AI use is limited, directors benefit from baseline education on their oversight obligations.

Do we need a separate AI committee?

Not necessarily. Most organisations expand the mandate of existing committees rather than create new ones. The appropriate structure depends on how strategically important AI is to your organisation and your current committee workload. We help determine the right approach for your board.

What level of technical knowledge do directors need?

Directors need enough AI literacy for effective oversight, not technical expertise. You should understand conceptually how AI systems work, what risks they present, and what questions to ask management. You do not need to understand algorithms or code.

What are the legal risks if we don't establish AI governance?

Directors may be personally liable under Section 180 of the Corporations Act for failing to exercise appropriate care and diligence in AI oversight. Case law establishes precedent for director liability in technology governance failures. Insurance may not cover claims arising from inadequate governance.

Discharge Your AI Governance Obligations

Directors have a duty to understand and oversee AI systems within their organisations. Waiting for a governance incident or regulatory intervention creates unnecessary risk.

Complimentary 60-minute consultation to discuss your board's AI governance requirements