Independent AI Impact Assessment for Australian Organisations

Australian regulators expect organisations to demonstrate governance over AI systems. APRA CPS 230 requires regulated entities to maintain operational risk management frameworks that cover their operations and service providers, which extends to AI systems. ASIC's REP 798 identified AI governance gaps across financial services.

We provide independent AI assessments that identify risks, validate compliance, and deliver board-ready recommendations.

AI Adoption Is Outpacing Governance

Regulatory reporting has revealed governance gaps across Australian organisations deploying AI systems.

Common governance gaps identified by regulators:

- Insufficient policies addressing algorithmic bias and consumer fairness

- AI adoption outpacing governance framework development

- Third-party AI model risks not adequately assessed

- Decentralised approaches without strategic oversight

Organisations deploying AI across multiple business units can benefit from independent assessment to identify governance gaps and establish appropriate controls.

Shadow AI

AI systems operating without central oversight. Marketing deployed a chatbot. Finance built a forecasting model. Operations automated document processing. Nobody coordinated. Your board asks how many AI systems you have, and you cannot answer with confidence.

Regulatory Expectations

APRA CPS 230 requires operational risk management frameworks that extend to AI systems. The Financial Accountability Regime (FAR) establishes accountability obligations for directors and senior executives of regulated financial institutions. Internal audit teams often need specialist expertise to assess AI-specific risks.

Board Questions

Your audit committee wants assurance that AI systems operate fairly, comply with regulatory expectations, and won't create reputational risk. The teams that build and run those systems cannot validate their own work independently. You need third-party assessment.

Independent AI Assurance for Australian Regulatory Requirements

We conduct AI impact assessments designed around ASIC and APRA expectations and Australian Government AI frameworks. Our assessments identify governance gaps, validate controls, and provide board-ready recommendations.

Regulatory Confidence

Meet governance expectations and build the evidence you need to demonstrate APRA CPS 230 compliance. Our assessments map findings directly to regulatory requirements, giving your board and audit committee the assurance they need.

Complete Visibility

Discover shadow AI, validate third-party vendor controls, and build a complete inventory of your AI estate. We find the models and systems that escaped central governance processes.

Independent Assurance

Third-party validation provides credible findings for regulatory discussions and board reporting. Our independence ensures assessments are objective and thorough.

Comprehensive AI Governance Review

AI Governance Framework

We evaluate your governance arrangements against regulatory expectations and APRA CPS 230 requirements. Assessment includes strategic oversight structures, policy coverage, board reporting mechanisms, and accountability frameworks.

AI Model Inventory

We discover and document all AI systems operating in your organisation, including shadow AI that bypassed approval processes. Each model is catalogued with ownership, purpose, data sources, risk tier, and deployment status.
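To make the catalogue fields concrete, here is a minimal sketch of one inventory record, assuming Python 3.9+. The `ModelRecord` class, the three-level `RiskTier` scheme, the `centrally_approved` flag and the example systems are hypothetical illustrations, not a prescribed schema; your own risk framework would define the real tiers.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    # Hypothetical three-tier scheme; real tiers come from your risk framework.
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass
class ModelRecord:
    name: str
    owner: str                 # accountable business owner
    purpose: str
    data_sources: list[str]
    risk_tier: RiskTier
    deployment_status: str     # e.g. "in production", "pilot", "retired"
    centrally_approved: bool = False  # False flags potential shadow AI


def shadow_ai(inventory: list[ModelRecord]) -> list[str]:
    """Names of systems that never passed central approval."""
    return [m.name for m in inventory if not m.centrally_approved]


def count_by_tier(inventory: list[ModelRecord]) -> Counter:
    """How many systems sit in each risk tier (the board's 'how many?')."""
    return Counter(m.risk_tier for m in inventory)


# Hypothetical entries echoing the shadow AI example above.
inventory = [
    ModelRecord("Marketing chatbot", "Head of Marketing", "Customer enquiries",
                ["CRM"], RiskTier.MEDIUM, "in production"),
    ModelRecord("Cash-flow forecaster", "CFO", "Liquidity forecasting",
                ["General ledger"], RiskTier.HIGH, "in production",
                centrally_approved=True),
    ModelRecord("Document classifier", "Head of Operations", "Invoice triage",
                ["Shared drive"], RiskTier.LOW, "pilot"),
]

print(len(inventory))        # 3
print(shadow_ai(inventory))  # ['Marketing chatbot', 'Document classifier']
```

Keeping approval status as an explicit field means shadow AI shows up as a simple query over the inventory rather than a separate list that can drift out of date.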

Bias and Fairness Testing

For high-risk AI systems, we conduct algorithmic bias testing using industry-standard fairness metrics. We evaluate whether model outcomes differ unfairly across groups defined by protected characteristics and whether consumers are treated fairly (a specific ASIC concern).
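As an illustration of what a fairness metric measures, the sketch below computes two widely used group metrics: the demographic parity difference (gap between the highest and lowest favourable-outcome rates) and the disparate impact ratio (lowest rate divided by highest). These are example choices, not necessarily the metrics used in any given engagement, and the loan-approval data and group labels are hypothetical. A gap on its own does not establish unfair treatment; it flags where closer investigation is needed.

```python
from collections import defaultdict


def selection_rates(outcomes, groups):
    """Share of favourable outcomes (1) within each group."""
    totals = defaultdict(int)
    favourable = defaultdict(int)
    for y, g in zip(outcomes, groups):
        totals[g] += 1
        favourable[g] += y
    return {g: favourable[g] / totals[g] for g in totals}


def demographic_parity_difference(outcomes, groups):
    """Highest group rate minus lowest group rate (0 means parity)."""
    rates = selection_rates(outcomes, groups)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(outcomes, groups):
    """Lowest group rate divided by highest group rate (1 means parity)."""
    rates = selection_rates(outcomes, groups)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group receives favourable outcomes, so rates are equal
    return min(rates.values()) / highest


# Hypothetical loan decisions (1 = approved) for applicants in groups A and B.
approved = [1, 1, 1, 0, 1, 0, 0, 0]
group = ["A", "A", "A", "A", "B", "B", "B", "B"]

print(selection_rates(approved, group))                # {'A': 0.75, 'B': 0.25}
print(demographic_parity_difference(approved, group))  # 0.5
print(disparate_impact_ratio(approved, group))         # about 0.333
```

Reporting both a difference and a ratio helps boards read results: the difference shows the absolute gap in approval rates, while the ratio shows how the disadvantaged group fares relative to the advantaged one.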

Third-Party AI Vendor Assessment

Many organisations overlook risks from third-party AI embedded in vendor solutions. We evaluate your AI vendor governance and identify vendor-related risks aligned to CPS 230 material service provider requirements.

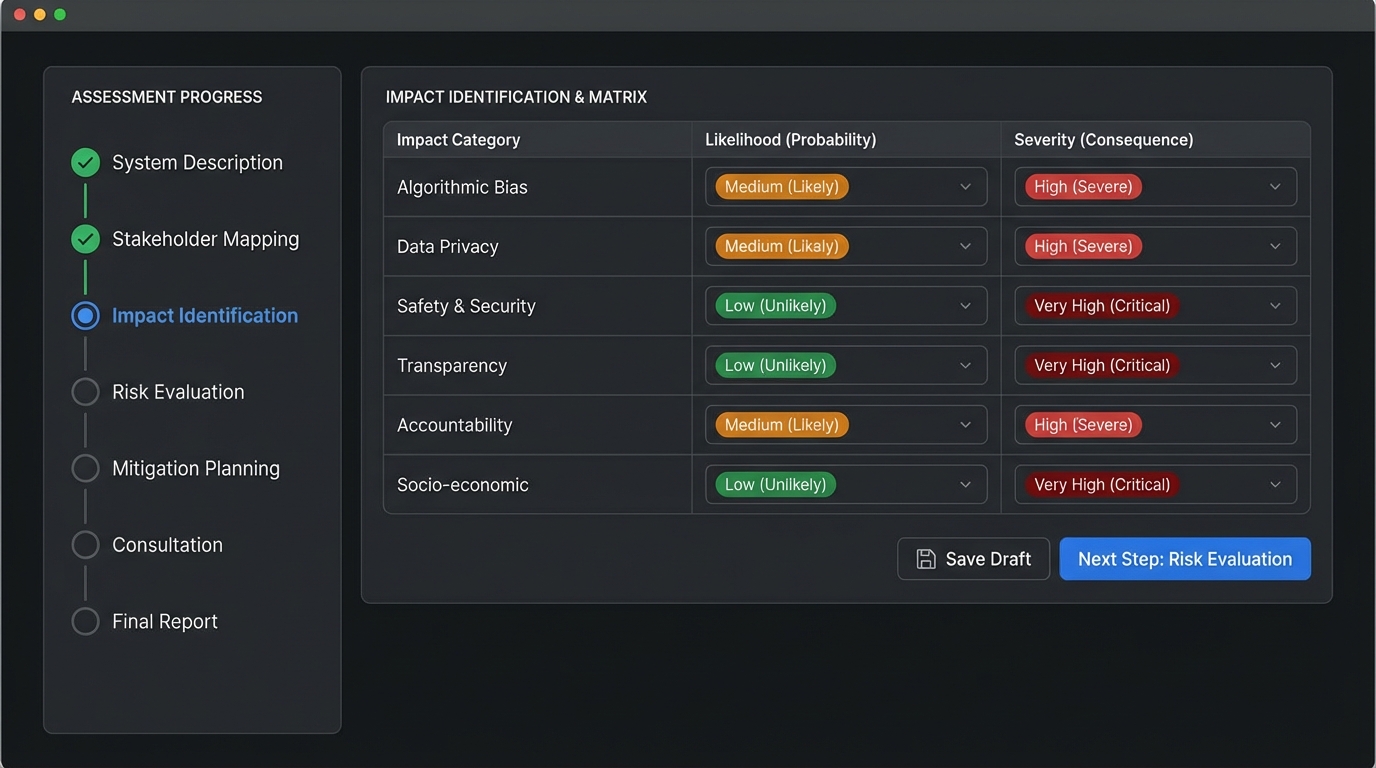

Risk Assessment and Controls

We identify risks across your AI portfolio using a framework aligned to APRA and ASIC expectations. Risks are assessed from both operational and consumer impact perspectives. We test whether governance controls are designed well and operating effectively.

Regulatory Compliance

We map your current state against ASIC REP 798, APRA CPS 230, Privacy Act 1988 requirements, and relevant Australian Government AI frameworks. You receive a compliance gap analysis with remediation priorities.

Our Assessment Process

Discovery & Planning (1-2 weeks)

We interview key stakeholders (Chief Risk Officer, Chief Audit Executive, Chief Data Officer, and model owners) to understand your AI landscape. We map use cases, identify third-party systems, and scope the assessment based on your regulatory context and risk profile.

Assessment & Testing (2-6 weeks)

We evaluate governance frameworks, model documentation, and controls. Where needed, we conduct algorithmic bias testing, fairness analysis, and control effectiveness reviews. All evidence collection follows professional audit standards.

Analysis & Reporting (1-2 weeks)

Findings are consolidated into a board-ready report with executive summary, detailed observations, root cause analysis, and recommendations mapped to ASIC and APRA expectations.

Delivery & Knowledge Transfer (1 week)

Final report delivery includes presentations to audit committees and executive teams. We provide management action plan support and knowledge transfer sessions to build your team's ongoing capability.

What You Receive

Board-Ready Assessment Report

- Executive summary for audit committees and boards

- Findings with risk ratings and root cause analysis

- Mapped to regulatory expectations and APRA CPS 230

AI Model Inventory

- Complete catalogue including shadow AI

- Risk classifications and ownership documentation

- Third-party vendor mapping (CPS 230 aligned)

Gap Analysis & Remediation Roadmap

- Current state vs. regulatory requirements

- Action plan with timelines and priorities

- Aligned to regulatory deadlines

Knowledge Transfer Sessions

- Workshops with internal audit and risk teams

- AI audit methodologies and best practices

- Capability building for internal teams

Common Questions

What is an AI impact assessment?

An AI impact assessment is an independent evaluation of your organisation's AI systems, governance frameworks, and controls. It examines whether AI operates effectively, fairly, and in compliance with regulatory expectations including APRA CPS 230 and Privacy Act requirements.

Why do we need an independent assessment?

Regulatory reporting has shown AI adoption often outpaces governance development in Australian organisations. An independent assessment provides objective assurance to boards, regulators, and stakeholders that AI risks are appropriately managed. External expertise is valuable given the specialist knowledge required to audit AI systems.

How long does an assessment take?

Duration depends on scope and complexity. A focused gap assessment typically takes 2-4 weeks, while a comprehensive audit of a large AI portfolio that runs through every phase of our process can extend beyond 8 weeks. We scope each engagement around your risk profile and deadlines.

How is this different from internal audit?

External AI specialists bring independent expertise, regulatory insight, and specialised methodologies that complement internal audit capabilities. Many organisations co-source AI audits, working collaboratively to build internal audit team capability through knowledge transfer.

Related Services

AI Governance Consulting

Build comprehensive AI governance frameworks that satisfy regulatory requirements.

Third-Party AI Risk Management

Assess and manage risks from AI vendors and embedded third-party AI systems.

Regulatory Compliance

Navigate overlapping AI compliance requirements across APRA, ASIC, and Privacy Act.

Ready to Address Your AI Governance Gaps?

Independent assessment provides assurance that your AI systems meet regulatory expectations and operate effectively, fairly, and with appropriate governance.

No-obligation initial consultation | Fixed-price engagements | Board-ready deliverables